Most Anticipated: The Great Winter 2025 Preview

It's cold, it's grey, it's bleak—but winter, at the very least, brings with it a glut of anticipation-inducing books. Here you’ll find nearly 100 titles that we’re excited to cozy up with this season. Some we’ve already read in galley form; others we’re simply eager to devour based on their authors, subjects, or blurbs. We'd love for you to find your next great read among them.

The Millions will be taking a hiatus for the next few months, but we hope to see you soon.

—Sophia Stewart, editor

January

The Legend of Kamui by Shirato Sanpei, tr. Richard Rubinger (Drawn & Quarterly)

The epic 10-volume series, a touchstone of longform storytelling in manga now published in English for the first time, follows outsider Kamui in 17th-century Japan as he fights his way up from peasantry to the prized role of ninja. —Michael J. Seidlinger

The Life of Herod the Great by Zora Neale Hurston (Amistad)

In the years before her death in 1960, Hurston was at work on what she envisioned as a continuation of her 1939 novel, Moses, Man of the Mountain. Incomplete, nearly lost to fire, and now published for the first time alongside scholarship from editor Deborah G. Plant, Hurston’s final manuscript reimagines Herod, villain of the New Testament Gospel accounts, as a magnanimous and beloved leader of First Century Judea. —Jonathan Frey

Mood Machine by Liz Pelly (Atria)

When you eagerly posted your Spotify Wrapped last year, did you notice how much of what you listened to tended to sound... the same? Have you seen all those links to Bandcamp pages your musician friends keep desperately posting in the hopes that maybe, just maybe, you might give them money for their art? If so, this book is for you. —John H. Maher

My Country, Africa by Andrée Blouin (Verso)

African revolutionary Blouin recounts a radical life steeped in activism in this vital autobiography, from her beginnings in a colonial orphanage to her essential role in the continent's midcentury struggles for decolonization. —Sophia M. Stewart

The First and Last King of Haiti by Marlene L. Daut (Knopf)

Donald Trump repeatedly directs extraordinary animus towards Haiti and Haitians. This biography of Henry Christophe—the man who played a pivotal role in the Haitian Revolution—might help Americans understand why. —Claire Kirch

The Bewitched Bourgeois by Dino Buzzati, tr. Lawrence Venuti (NYRB)

This is the second story collection, and fifth book, by the absurdist-leaning midcentury Italian writer—whose primary preoccupation was war novels that blend the brutal with the fantastical—to get the NYRB treatment. May it not be the last. —JHM

Y2K by Colette Shade (Dey Street)

The recent Y2K revival mostly makes me feel old, but Shade's essay collection deftly illuminates how we got here, connecting the era's social and political upheavals to today. —SMS

Darkmotherland by Samrat Upadhyay (Penguin)

In a vast dystopian reimagining of Nepal, Upadhyay braids narratives of resistance (political, personal) and identity (individual, societal) against a backdrop of natural disaster and state violence. The first book in nearly a decade from the Whiting Award–winning author of Arresting God in Kathmandu, this is Upadhyay’s most ambitious yet. —JF

Metamorphosis by Ross Jeffery (Truborn)

From the author of I Died Too, But They Haven’t Buried Me Yet, a woman leads a double life as she loses her grip on reality by choice, wearing a mask that reflects her inner demons, as she descends into a hell designed to reveal the innermost depths of her grief-stricken psyche. —MJS

The Containment by Michelle Adams (FSG)

Legal scholar Adams charts the failure of desegregation in the American North through the story of the struggle to integrate suburban schools in Detroit, which remained almost completely segregated nearly two decades after Brown v. Board. —SMS

Death of the Author by Nnedi Okorafor (Morrow)

African Futurist Okorafor’s book-within-a-book offers interchangeable cover images, one for the story of a disabled, Black visionary in a near-present day and the other for the lead character’s speculative posthuman novel, Rusted Robots. Okorafor deftly keeps the alternating chapters and timelines in conversation with one another. —Nathalie op de Beeck

Open Socrates by Agnes Callard (Norton)

Practically everything Agnes Callard says or writes ushers in a capital-D Discourse. (Remember that profile?) If she can do the same with a study of the philosophical world’s original gadfly, culture will be better off for it. —JHM

Aflame by Pico Iyer (Riverhead)

Presumably he finds time to eat and sleep in there somewhere, but it certainly appears as if Iyer does nothing but travel and write. His latest, following 2023’s The Half Known Life, makes a case for the sublimity, and necessity, of silent reflection. —JHM

The In-Between Bookstore by Edward Underhill (Avon)

A local bookstore becomes a literal portal to the past for a trans man who returns to his hometown in search of a fresh start in Underhill's tender debut. —SMS

Good Girl by Aria Aber (Hogarth)

Aber, an accomplished poet, turns to prose with a debut novel set in the electric excess of Berlin’s bohemian nightlife scene, where a young German-born Afghan woman finds herself enthralled by an expat American novelist as her country—and, soon, her community—is enflamed by xenophobia. —JHM

The Orange Eats Creeps by Grace Krilanovich (Two Dollar Radio)

Krilanovich’s 2010 cult classic, about a runaway teen with drug-fueled ESP who searches for her missing sister across surreal highways while being chased by a killer named Dactyl, gets a much-deserved reissue. —MJS

Mona Acts Out by Mischa Berlinski (Liveright)

In the latest novel from the National Book Award finalist, a 50-something actress reevaluates her life and career when #MeToo allegations roil the off-off-Broadway Shakespearean company that has cast her in the role of Cleopatra. —SMS

Something Rotten by Andrew Lipstein (FSG)

A burnt-out couple leave New York City for what they hope will be a blissful summer in Denmark, but their vacation derails after a close friend is diagnosed with a rare illness and their marriage is tested by toxic influences. —MJS

The Sun Won't Come Out Tomorrow by Kristen Martin (Bold Type)

Martin's debut is a cultural history of orphanhood in America, from the 1800s to today, interweaving personal narrative and archival research to upend the traditional "orphan narrative," from Oliver Twist to Annie. —SMS

We Do Not Part by Han Kang, tr. E. Yaewon and Paige Aniyah Morris (Hogarth)

Kang’s Nobel win last year surprised many, but the consistency of her talent certainly shouldn't now. The latest from the author of The Vegetarian—the haunting tale of a Korean woman who sets off to save her injured friend’s pet at her home in Jeju Island during a deadly snowstorm—will likely once again confront the horrors of history with clear eyes and clarion prose. —JHM

We Are Dreams in the Eternal Machine by Deni Ellis Béchard (Milkweed)

As the conversation around emerging technology skews increasingly to apocalyptic and utopian extremes, Béchard’s latest novel adopts the heterodox-to-everyone approach of embracing complexity. Here, a cadre of characters is isolated by a rogue but benevolent AI into controlled environments engineered to achieve their individual flourishing. The AI may have taken over, but it only wants the best for us. —JF

The Harder I Fight the More I Love You by Neko Case (Grand Central)

Singer-songwriter Case, a country- and folk-inflected indie rocker and sometime vocalist for the New Pornographers, takes her memoir’s title from her 2013 solo album. Followers of PNW music scene chronicles like Kathleen Hanna’s Rebel Girl and drummer Steve Moriarty’s Mia Zapata and the Gits will consider Case’s backstory a must-read. —NodB

The Loves of My Life by Edmund White (Bloomsbury)

The 85-year-old White recounts six decades of love and sex in this candid and erotic memoir, crafting a landmark work of queer history in the process. Seminal indeed. —SMS

Blob by Maggie Su (Harper)

In Su’s hilarious debut, Vi Liu is a college dropout working a job she hates, nothing really working out in her life, when she stumbles across a sentient blob that she begins to transform into her ideal, perfect man, one who just might resemble actor Ryan Gosling. —MJS

Sinkhole and Other Inexplicable Voids by Leyna Krow (Penguin)

Krow’s debut novel, Fire Season, traced the combustible destinies of three Northwest tricksters in the aftermath of an 1889 wildfire. In her second collection of short fiction, Krow amplifies surreal elements as she tells stories of ordinary lives. Her characters grapple with deadly viruses, climate change, and disasters of the Anthropocene’s wilderness. —NodB

Black in Blues by Imani Perry (Ecco)

The National Book Award winner—and one of today's most important thinkers—returns with a masterful meditation on the color blue and its role in Black history and culture. —SMS

Too Soon by Betty Shamieh (Avid)

The timely debut novel by Shamieh, a playwright, follows three generations of Palestinian American women as they navigate war, migration, motherhood, and creative ambition. —SMS

How to Talk About Love by Plato, tr. Armand D'Angour (Princeton UP)

With modern romance on its last legs, D'Angour revisits Plato's Symposium, mining the philosopher's masterwork for timeless, indispensable insights into love, sex, and attraction. —SMS

At Dark, I Become Loathsome by Eric LaRocca (Blackstone)

After Ashley Lutin’s wife dies, he grieves in a peculiar way, posting online, “If you're reading this, you've likely thought that the world would be a better place without you,” and then offering a strange ritual to those who respond to the line, equally grieving and lost, in need of transcendence. —MJS

February

No One Knows by Osamu Dazai, tr. Ralph McCarthy (New Directions)

A selection of stories translated into English for the first time, from across Dazai’s career, demonstrates his penchant for exploring conformity and society’s often impossible expectations of its members. —MJS

Mutual Interest by Olivia Wolfgang-Smith (Bloomsbury)

This queer love story set in post–Gilded Age New York, from the author of Glassworks (and one of my favorite Millions essays to date), explores sex, power, and capitalism through the lives of three queer misfits. —SMS

Pure, Innocent Fun by Ira Madison III (Random House)

This podcaster and pop culture critic spoke to indie booksellers at a fall trade show I attended, regaling us with key cultural moments in the 1990s that shaped his youth in Milwaukee and being Black and gay. If the book is as clever and witty as Madison is, it's going to be a winner. —CK

Gliff by Ali Smith (Pantheon)

The Scottish author has been on the scene since 1997 but is best known today for a seasonal quartet from the late twenty-teens that began in 2016 with Autumn and ended in 2020 with Summer. Here, she takes the genre turn, setting two children and a horse loose in an authoritarian near future. —JHM

Land of Mirrors by Maria Medem, tr. Aleshia Jensen and Daniela Ortiz (D&Q)

This hypnotic graphic novel from one of Spain's most celebrated illustrators follows Antonia, the sole inhabitant of a deserted town, on a color-drenched quest to preserve the dying flower that gives her purpose. —SMS

Bibliophobia by Sarah Chihaya (Random House)

As odes to the "lifesaving power of books" proliferate amid growing literary censorship, Chihaya—a brilliant critic and writer—complicates this platitude in her revelatory memoir about living through books and the power of reading to, in the words of blurber Namwali Serpell, "wreck and redeem our lives." —SMS

Reading the Waves by Lidia Yuknavitch (Riverhead)

Yuknavitch continues the personal story she began in her 2011 memoir, The Chronology of Water. More than a decade after that book, and nearly undone by a history of trauma and the death of her daughter, Yuknavitch revisits the solace she finds in swimming (she was once an Olympic hopeful) and in her literary community. —NodB

The Dissenters by Youssef Rakha (Graywolf)

A son reevaluates the life of his Egyptian mother after her death in Rakha's novel. As he recounts her sprawling life story—from her youth in 1960s Cairo to her experience of the 2011 Tahrir Square protests—a vivid portrait of faith, feminism, and contemporary Egypt emerges. —SMS

Tetra Nova by Sophia Terazawa (Deep Vellum)

Deep Vellum has a particularly keen eye for fiction in translation that borders on the unclassifiable. This debut from a poet the press has published twice, billed as the story of “an obscure Roman goddess who re-imagines herself as an assassin coming to terms with an emerging performance artist identity in the late-20th century,” seems right up that alley. —JHM

David Lynch's American Dreamscape by Mike Miley (Bloomsbury)

Miley puts David Lynch's films in conversation with literature and music, forging thrilling and unexpected connections—between Eraserhead and "The Yellow Wallpaper," Inland Empire and "mixtape aesthetics," Lynch and the work of Cormac McCarthy. Lynch devotees should run, not walk. —SMS

There's No Turning Back by Alba de Céspedes, tr. Ann Goldstein (Washington Square)

Goldstein is an indomitable translator. Without her, how would you read Ferrante? Here, she takes her pen to a work by the great Cuban-Italian writer de Céspedes, banned in the fascist Italy of the 1930s, that follows a group of female literature students living together in a Roman boarding house. —JHM

Beta Vulgaris by Margie Sarsfield (Norton)

Named for the humble beet plant and meaning, in a rough translation from the Latin, "vulgar second," Sarsfield’s surreal debut finds a seasonal harvest worker watching her boyfriend and other colleagues vanish amid “the menacing but enticing siren song of the beets.” —JHM

People From Oetimu by Felix Nesi, tr. Lara Norgaard (Archipelago)

The center of Nesi’s wide-ranging debut novel is a police station on the border between East and West Timor, where a group of men have gathered to watch the final of the 1998 World Cup while a political insurgency stirs without. Nesi, in English translation here for the first time, circles this moment broadly, reaching back to the various colonialist projects that have shaped Timor and the lives of his characters. —JF

Brother Brontë by Fernando A. Flores (MCD)

This surreal tale, set in a 2038 dystopian Texas, is a celebration of resistance to authoritarianism, a mash-up of Octavia Butler, Ray Bradbury, and John Steinbeck. —CK

Alligator Tears by Edgar Gomez (Crown)

The High-Risk Homosexual author returns with a comic memoir-in-essays about fighting for survival in the Sunshine State, exploring his struggle with poverty through the lens of his queer, Latinx identity. —SMS

Theory & Practice by Michelle De Kretser (Catapult)

This lightly autofictional novel—De Kretser's best yet, and one of my favorite books of this year—centers on a grad student's intellectual awakening, messy romantic entanglements, and fraught relationship with her mother as she minds the gap between studying feminist theory and living a feminist life. —SMS

The Lamb by Lucy Rose (Harper)

Rose’s cautionary and caustic folk tale is about a mother and daughter who live alone in the forest, quiet and tranquil except for the visitors the mother brings home, whom she calls “strays,” wining and dining them until they feast upon the bodies. —MJS

Disposable by Sarah Jones (Avid)

Jones, a senior writer for New York magazine, gives a voice to America's most vulnerable citizens, who were deeply and disproportionately harmed by the pandemic—a catastrophe that exposed the nation's disregard, if not outright contempt, for its underclass. —SMS

No Fault by Haley Mlotek (Viking)

Written in the aftermath of the author's divorce from the man she had been with for 12 years, this "Memoir of Romance and Divorce," per its subtitle, is a wise and distinctly modern accounting of the end of a marriage, and what it means on a personal, social, and literary level. —SMS

Enemy Feminisms by Sophie Lewis (Haymarket)

Lewis, one of the most interesting and provocative scholars working today, looks at certain malignant strains of feminism that have done more harm than good in her latest book. In the process, she probes the complexities of gender equality and offers an alternative vision of a feminist future. —SMS

Lion by Sonya Walger (NYRB)

Walger—a successful actor perhaps best known for her turn as Penny Widmore on Lost—debuts with a remarkably deft autobiographical novel (published by NYRB no less!) about her relationship with her complicated, charismatic Argentinian father. —SMS

The Voices of Adriana by Elvira Navarro, tr. Christina MacSweeney (Two Lines)

A Spanish writer and philosophy scholar grieves her mother and cares for her sick father in Navarro's innovative, metafictional novel. —SMS

Autotheories ed. Alex Brostoff and Vilashini Cooppan (MIT)

Theory wonks will love this rigorous and surprisingly playful survey of the genre of autotheory—which straddles autobiography and critical theory—with contributions from Judith Butler, Jamieson Webster, and more.

Fagin the Thief by Allison Epstein (Doubleday)

I enjoy retellings of classic novels by writers who turn the spotlight on interesting minor characters. This is an excursion into the world of Charles Dickens, told from the perspective of the iconic thief from Oliver Twist. —CK

Crush by Ada Calhoun (Viking)

Calhoun—the masterful memoirist behind the excellent Also A Poet—makes her first foray into fiction with a debut novel about marriage, sex, heartbreak, and all-consuming desire. —SMS

Show Don't Tell by Curtis Sittenfeld (Random House)

Sittenfeld's observations in her writing are always clever, and this second collection of short fiction includes a tale about the main character in Prep, who visits her boarding school decades later for an alumni reunion. —CK

Right-Wing Women by Andrea Dworkin (Picador)

One in a trio of Dworkin titles being reissued by Picador, this 1983 meditation on women and American conservatism strikes a troublingly resonant chord in the shadow of the recent election, which saw 45% of women vote for Trump. —SMS

The Talent by Daniel D'Addario (Scout)

For those whose favorite season is awards season, the debut novel from D'Addario, chief correspondent at Variety, weaves an awards-season yarn centering on five stars competing for the Best Actress statue at the Oscars. If you know who Paloma Diamond is, you'll love this. —SMS

Death Takes Me by Cristina Rivera Garza, tr. Sarah Booker and Robin Myers (Hogarth)

The Pulitzer winner’s latest is about an eponymously named professor who discovers the body of a mutilated man with a bizarre poem left with the body, becoming entwined in the subsequent investigation as more bodies are found. —MJS

The Strange Case of Jane O. by Karen Thompson Walker (Random House)

Jane goes missing after a sudden, debilitating, and dreadful wave of symptoms that include hallucinations, amnesia, and premonitions, calling into question the foundations of her life and reality, motherhood and buried trauma. —MJS

Song So Wild and Blue by Paul Lisicky (HarperOne)

If it weren’t Joni Mitchell’s world with all of us just living in it, one might be tempted to say the octogenarian master songstress is having a moment: this memoir of falling for the blue beauty of Mitchell’s work follows two other inventive books about her life and legacy: Ann Powers's Traveling and Henry Alford's I Dream of Joni. —JHM

Mornings Without Mii by Mayumi Inaba, tr. Ginny Tapley (FSG)

A woman writer meditates on solitude, art, and independence alongside her beloved cat in Inaba's modern classic—a book so squarely up my alley I'm somehow embarrassed. —SMS

True Failure by Alex Higley (Coffee House)

When Ben loses his job, he decides to pretend to go to work while instead auditioning for Big Shot, a popular reality TV show that he believes might be a launchpad for his future successes. —MJS

March

Woodworking by Emily St. James (Crooked Reads)

Those of us who have been reading St. James since the A.V. Club days may be surprised to see this marvelous critic's first novel—in this case, about a trans high school teacher befriending one of her students, the only fellow trans woman she’s ever met—but all the more excited for it. —JHM

Optional Practical Training by Shubha Sunder (Graywolf)

Told as a series of conversations, Sunder’s debut novel follows its recently graduated Indian protagonist in 2006 Cambridge, Mass., as she sees out her student visa teaching in a private high school and contriving to find her way between worlds that cannot seem to comprehend her. Quietly subversive, this is an immigration narrative to undermine the various reductionist immigration narratives of our moment. —JF

Love, Queenie by Mayukh Sen (Norton)

Merle Oberon, one of Hollywood's first South Asian movie stars, gets her due in this engrossing biography, which masterfully explores Oberon's painful upbringing, complicated racial identity, and much more. —SMS

The Age of Choice by Sophia Rosenfeld (Princeton UP)

At a time when we are awash with options—indeed, drowning in them—Rosenfeld's analysis of how our modern idea of "freedom" became bound up in the idea of personal choice feels especially timely, touching on everything from politics to romance. —SMS

Sucker Punch by Scaachi Koul (St. Martin's)

One of the internet's funniest writers follows up One Day We'll All Be Dead and None of This Will Matter with a sharp and candid collection of essays that sees her life go into a tailspin during the pandemic, forcing her to reevaluate her beliefs about love, marriage, and what's really worth fighting for. —SMS

The Mysterious Disappearance of the Marquise of Loria by José Donoso, tr. Megan McDowell (New Directions)

The ever-excellent McDowell translates yet another work by the influential Chilean author for New Directions, proving once again that Donoso had a knack for titles: this one follows up 2024’s behemoth The Obscene Bird of Night. —JHM

Remember This by Anthony Giardina (FSG)

On its face, it’s another book about a writer living in Brooklyn. A layer deeper, it’s a book about fathers and daughters, occupations and vocations, ethos and pathos, failure and success. —JHM

Ultramarine by Mariette Navarro (Deep Vellum)

In this metaphysical and lyrical tale, a captain known for sticking to protocol begins losing control not only of her crew and ship but also her own mind. —MJS

We Tell Ourselves Stories by Alissa Wilkinson (Liveright)

Amid a spate of new books about Joan Didion published since her death in 2021, this entry by Wilkinson (one of my favorite critics working today) stands out for its approach, which centers Hollywood—and its meaning-making apparatus—as an essential key to understanding Didion's life and work. —SMS

Seven Social Movements that Changed America by Linda Gordon (Norton)

This book—by a truly renowned historian—about the power that ordinary citizens can wield when they organize to make their community a better place for all could not come at a better time. —CK

Mothers and Other Fictional Characters by Nicole Graev Lipson (Chronicle Prism)

Lipson reconsiders the narratives of womanhood that constrain our lives and imaginations, mining the canon for alternative visions of desire, motherhood, and more—from Kate Chopin and Gwendolyn Brooks to Philip Roth and Shakespeare—to forge a new story for her life. —SMS

Goddess Complex by Sanjena Sathian (Penguin)

Doppelgängers have been done to death, but Sathian's examination of Millennial womanhood—part biting satire, part twisty thriller—breathes new life into the trope while probing the modern realities of procreation, pregnancy, and parenting. —SMS

Stag Dance by Torrey Peters (Random House)

The author of Detransition, Baby offers four tales for the price of one: a novel and three stories that promise to put gender in the crosshairs with as sharp a style and swagger as Peters’ beloved latest. The novel even has crossdressing lumberjacks. —JHM

On Breathing by Jamieson Webster (Catapult)

Webster, a practicing psychoanalyst and a brilliant writer to boot, explores that most basic human function—breathing—to address questions of care and interdependence in an age of catastrophe. —SMS

Unusual Fragments: Japanese Stories (Two Lines)

The stories of Unusual Fragments, including work by Yoshida Tomoko, Nobuko Takai, and other seldom translated writers from the same ranks as Abe and Dazai, comb through themes like alienation and loneliness, from a storm chaser entering the eye of a storm to a medical student observing a body as it is contorted into increasingly violent positions. —MJS

The Antidote by Karen Russell (Knopf)

Russell has quipped that this Dust Bowl story of uncanny happenings in Nebraska is the “drylandia” to her 2011 Florida novel, Swamplandia! In this suspenseful account, a woman working as a so-called prairie witch serves as a storage vault for her townspeople’s most troubled memories of migration and Indigenous genocide. With a murderer on the loose, a corrupt sheriff handling the investigation, and a Black New Deal photographer passing through to document Americana, the witch loses her memory and supernatural events parallel the area’s lethal dust storms. —NodB

On the Clock by Claire Baglin, tr. Jordan Stump (New Directions)

Baglin's bildungsroman, translated from the French, probes the indignities of poverty and service work from the vantage point of its 20-year-old narrator, who works at a fast-food joint and recalls memories of her working-class upbringing. —SMS

Motherdom by Alex Bollen (Verso)

Parenting is difficult enough without dealing with myths of what it means to be a good mother. I, who often felt like a failure as a mother, appreciate Bollen's focus on a more realistic approach to parenting. —CK

The Magic Books by Anne Lawrence-Mathers (Yale UP)

For that friend who wants to concoct the alchemical elixir of life, or the person who cannot quit Susanna Clarke’s Jonathan Strange and Mr. Norrell, Lawrence-Mathers collects 20 illuminated medieval manuscripts devoted to magical enterprise. Her compendium includes European volumes on astronomy, magical training, and the imagined intersection between science and the supernatural. —NodB

Theft by Abdulrazak Gurnah (Riverhead)

The first novel by the Tanzanian-British Nobel laureate since his surprise win in 2021 is a story of class, seismic cultural change, and three young people in a small Tanzanian town, caught up in both as their lives dramatically intertwine. —JHM

Twelve Stories by American Women, ed. Arielle Zibrak (Penguin Classics)

Zibrak, author of a delicious volume on guilty pleasures (and a great essay here at The Millions), curates a dozen short stories by women writers who have long been left out of the American literary canon—most of them women of color—from Frances Ellen Watkins Harper to Zitkala-Ša. —SMS

I'll Love You Forever by Giaae Kwon (Holt)

K-pop’s sky-high place in the fandom landscape made a serious critical assessment inevitable. This one blends cultural criticism with memoir, using major artists and their careers as a lens through which to view the contemporary Korean sociocultural landscape writ large. —JHM

The Buffalo Hunter Hunter by Stephen Graham Jones (Saga)

Jones, the acclaimed author of The Only Good Indians and the Indian Lake Trilogy, offers a unique tale of historical horror, a revenge tale about a vampire descending upon the Blackfeet reservation and the manifold of carnage in their midst. —MJS

True Mistakes by Lena Moses-Schmitt (University of Arkansas Press)

Full disclosure: Lena is my friend. But part of why I wanted to be her friend in the first place is because she is a brilliant poet. Selected by Patricia Smith as a finalist for the Miller Williams Poetry Prize, and blurbed by the great Heather Christle and Elisa Gabbert, this debut collection seeks to turn "mistakes" into sites of possibility. —SMS

Perfection by Vincenzo Latronico, tr. Sophie Hughes (NYRB)

Anna and Tom are expats living in Berlin enjoying their freedom as digital nomads, cultivating their passion for capturing perfect images, but after both friends and time itself move on, their own pocket of creative freedom turns to boredom, their life trajectories cast in doubt. —MJS

Guatemalan Rhapsody by Jared Lemus (Ecco)

Lemus's debut story collection paints a composite portrait of the people who call Guatemala home—and those who have left it behind—with a cast of characters that includes a medicine man, a custodian at an underfunded college, wannabe tattoo artists, four orphaned brothers, and many more.

Pacific Circuit by Alexis Madrigal (MCD)

The Oakland, Calif.–based contributing writer for the Atlantic digs deep into the recent history of a city long under-appreciated and under-served that has undergone head-turning changes throughout the rise of Silicon Valley. —JHM

Barbara by Joni Murphy (Astra)

Described as "Oppenheimer by way of Lucia Berlin," Murphy's character study follows the titular starlet as she navigates the twinned convulsions of Hollywood and history in the Atomic Age.

Sister Sinner by Claire Hoffman (FSG)

This biography of the fascinating Aimee Semple McPherson, America's most famous evangelist, takes religion, fame, and power as its subjects alongside McPherson, whose life was suffused with mystery and scandal. —SMS

Trauma Plot by Jamie Hood (Pantheon)

In this bold and layered memoir, Hood confronts three decades of sexual violence and searches for truth among the wreckage. Kate Zambreno calls Trauma Plot the work of "an American Annie Ernaux." —SMS

Hey You Assholes by Kyle Seibel (Clash)

Seibel’s debut story collection ranges widely from the down-and-out to the downright bizarre as he examines with heart and empathy the strife and struggle of his characters. —MJS

James Baldwin by Magdalena J. Zaborowska (Yale UP)

Zaborowska examines Baldwin's unpublished papers and his material legacy (e.g. his home in France) to probe the great writer's life and work, as well as the emergence of the "Black queer humanism" that Baldwin espoused. —CK

Stop Me If You've Heard This One by Kristen Arnett (Riverhead)

Arnett is always brilliant and this novel about the relationship between Cherry, a professional clown, and her magician mentor, "Margot the Magnificent," provides a fascinating glimpse of the unconventional lives of performance artists. —CK

Paradise Logic by Sophie Kemp (S&S)

The deal announcement describes the ever-punchy writer’s debut novel with an infinitely appealing appellation: “debauched picaresque.” If that’s not enough to draw you in, the truly unhinged cover should be. —JHM

A Year in Reading: 2024

Welcome to the 20th (!) installment of The Millions' annual Year in Reading series, which gathers together some of today's most exciting writers and thinkers to share the books that shaped their year. YIR is not a collection of year-end best-of lists; think of it, perhaps, as an assemblage of annotated bibliographies. We've invited contributors to reflect on the books they read this year—an intentionally vague prompt—and encouraged them to approach the assignment however they choose.

In writing about our reading lives, as YIR contributors are asked to do, we inevitably write about our personal lives, our inner lives. This year, a number of contributors read their way through profound grief and serious illness, through new parenthood and cross-country moves. Some found escape in frothy romances, mooring in works of theology, comfort in ancient epic poetry. More than one turned to the wisdom of Ursula K. Le Guin. Many describe a book finding them just when they needed it.

Interpretations of the assignment were wonderfully varied. One contributor, a music critic, considered the musical analogs to the books she read, while another mapped her reads from this year onto constellations. Most people's reading was guided purely by pleasure, or else a desire to better understand events unfolding in their lives or the larger world. Yet others centered their reading around a certain sense of duty: this year one contributor committed to finishing the six Philip Roth novels he had yet to read, an undertaking that he likens to “eating a six-pack of paper towels.” (Lucky for us, he included in his essay his final ranking of Roth's oeuvre.)

The books that populate these essays range widely, though the most commonly noted title this year was Tony Tulathimutte’s story collection Rejection. The work of newly minted National Book Award winner Percival Everett, particularly his acclaimed novel James, was also widely read and written about. And as the genocide of Palestinians in Gaza enters its second year, many contributors sought out Isabella Hammad’s searing, clear-eyed essay Recognizing the Stranger.

Like so many endeavors in our chronically under-resourced literary community, Year in Reading is a labor of love. The Millions is a one-person editorial operation (with an invaluable assist from SEO maven Dani Fishman), and producing YIR—and witnessing the joy it brings contributors and readers alike—has been the highlight of my tenure as editor. I’m profoundly grateful for the generosity of this year’s contributors, whose names and entries will be revealed below over the next three weeks, concluding on Wednesday, December 18. Be sure to subscribe to The Millions’ free newsletter to get the week’s entries sent straight to your inbox each Friday.

—Sophia Stewart, editor

Becca Rothfeld, author of All Things Are Too Small

Carvell Wallace, author of Another Word for Love

Charlotte Shane, author of An Honest Woman

Brianna Di Monda, writer and editor

Nell Irvin Painter, author of I Just Keep Talking

Carrie Courogen, author of Miss May Does Not Exist

Ayşegül Savaş, author of The Anthropologists

Zachary Issenberg, writer

Tony Tulathimutte, author of Rejection

Ann Powers, author of Traveling: On the Path of Joni Mitchell

Lidia Yuknavitch, author of Reading the Waves

Nicholas Russell, writer and critic

Daniel Saldaña París, author of Planes Flying Over a Monster

Lili Anolik, author of Didion and Babitz

Deborah Ghim, editor

Emily Witt, author of Health and Safety

Nathan Thrall, author of A Day in the Life of Abed Salama

Lena Moses-Schmitt, author of True Mistakes

Jeremy Gordon, author of See Friendship

John Lee Clark, author of Touch the Future

Ellen Wayland-Smith, author of The Science of Last Things

Edwin Frank, publisher and author of Stranger Than Fiction

Sophia Stewart, editor of The Millions

A Year in Reading Archives: 2023, 2022, 2021, 2020, 2019, 2018, 2017, 2016, 2015, 2014, 2013, 2011, 2010, 2009, 2008, 2007, 2006, 2005

Draw Hard

Harold and the Purple Crayon is a classic children’s book. Is it also a writing guide? In an essay for Bookslut, Mairead Case explains why she re-reads it whenever she’s finishing a project: the main character’s need to create a room for himself is a corollary to the writing process.

Fixed by Camel: On Gender, Books, and Children

1.

Count weather among the forces that I move through life without fully understanding. On a recent frigid Saturday, a sharp chill hunted my joints through worn thermals and cheap gloves. Forget grocery shopping, not with the stroller, not in this cold. My 16-month-old son and I retreated to the library. More than basmati rice or cauliflower, we needed open space, the familiar thick carpet where he could squat and squeal freely. We needed the warm light of enormous lampshades embossed with ants, birds, and humpback whales. We needed more books.

Maybe that should read "wanted." My son hadn’t tired of Good Dog, Carl or My Friends. He’d started requesting Tickle, Tickle by name. His mother invoked Knuffle Bunny while he handed her laundry, and Brush Your Teeth, Please had helped me transform a grim chore into something like dessert. (Grape-flavored toothpaste deserves some credit here.) For weeks, maybe months, books had reliably engaged him, exciting or calming him depending on the title, the time of day, and what could be called his nascent taste. A rotation of Goodnight Moon, Good Night, Gorilla, and Goodnight, Goodnight, Construction Site guided him to sleep most nights. Our afternoons sometimes focused on books of a certain theme: bunnies, oceans, dogs, primates. Reading was becoming a force that shaped his world, but unlike a polar vortex or a hurricane, it felt like a force within parental control. Three weeks later, our borrowed books due, I am less sure. I can control the presence or absence of books. I can curate his library. I can open the book and ask him “Where is the bird?” After that, mystery follows. Once the words leave my mouth for his ears and brain, they enter a universe where nothing is fixed.

This particular trip to the library marked the end of an eventful week in his early literacy. At bedtime the previous Sunday, he had restacked his books to recover a strategically buried title, Trains Go. My son sat in his typical way, circling once and bracing with both hands before thudding down. (“I learned to sit from my friend the dog...”) He laughed, looking like a tiny John Belushi. In this moment, I saw a flash of what I’ve heard other parents call the Boy-Boy, a term I understand but dislike. He raised the book, which we had read dozens of times, above his head.

In that moment I thought about another force in the world, the force behind the very idea of a Boy-Boy: I thought about gender. My son leaned forward, arms raised and extended. A smile strained his cheeks, seven tiny teeth bared. His whole body twisted into an absurd, miniature shrine to the ultimate Boy-Boy book, which he loves with an intensity that surprises me, though I bought it for him. How little control I have over what gender information he learns! Male adults he has barely met teach him “High five!” and call him “Buddy.” His favorite YouTube videos feature John Lee Hooker wanly performing “Boom, Boom, Boom,” and Ray Charles teaching The Blues Brothers to “Shake a Tail Feather.” The two-year-old boy at the Houston Children’s Museum shows him that cars go “rrrrrr,” and by that evening, my son imitates him precisely. At a playground’s toy house, a trio of four-year-old girls cold shoulders him; at daycare, where his favorite place to play is the toy kitchen, his three tias teach him that la cocina is for boys, too. This thrills his mother and me, even if we still haven’t been able to stop calling him, affectionately, “Mister, Mister.” That night, my son held Trains Go over his head and I thought, Gender is weather: it’s a force you can’t do much to change, the changes of which are, over time, unpredictable and not entirely benign.

The horizontal outline of Trains Go settled in my hands, and the contours of its worn cardboard pages dragged me out of abstraction. “Junebug, we read that already,” I said. He grunted, then opened it in his own lap. “Wooo woooo wooooooo,” he said, reading words not on that page, but the next one. I shook my head, astonished. The next storytime, he opened The House in the Night, flapping his lips and saying “bah-bah, baah-baaah,” as he must think I do. He learns, as toddlers do, through mimicry. On the shelf above Harold and the Purple Crayon and the one below What to Expect: The Second Year rests my neglected copy of Poetics, in which Aristotle says: “Imitation is natural to man from childhood, one of his advantages over the lower animals being this, that he is the most imitative creature in the world, and learns at first by imitation.” Reading this, after he went to bed that night, I wondered even more intensely, what does he learn about gender from me? Does he learn the next claim in Poetics, that “it is also natural for all to delight in works of imitation”? All feels impossible, because I know I cannot delight in the countless imitations of violence and chauvinism and privilege I witness in too many fathers and sons and brothers and husbands. So if I can’t delight, can he? Of course he can. I start to worry that I should be more vigilant in my observations. What exactly does he imitate? What does he ignore?

2.

I am probably more typically masculine now, as a father and husband, than I have been since age seven, when I left behind construction trucks and dinosaurs for a Cabbage Patch doll in a Boston Red Sox uniform. I named the doll Ellis Burks, after the actual center fielder, and I thought I treated him with the tenderness of any of the Girl-Girls in the playhouse. I referred to him formally, using only his complete name. Ellis Burks, drink your milk. Ellis Burks, go to sleep. Ellis Burks, why are you crying? Ellis Burks, let’s play catch.

Later in elementary school, after I memorized the presidents in order using flashcards my father had bought me, my cousins and I filled summers with games of “White House” on piles of seaside driftwood. “White House” cribbed its structure from your typical “House” played by aspiring princesses everywhere. I adapted it, persuading my younger siblings and cousins to assume the identities of actual figures: Aaron Burr, my shifty vice president, James Madison, my trusted secretary of state. My cousin Kate, of course, was Mrs. Jefferson. As a parent, my younger self’s lack of imagination for the female roles troubles me. Nerd that I was, fidelity to fact did not keep me from asking Kate’s older sister to play Queen Victoria (not yet crowned) or Catherine the Great (dead). No: a hole in my own concept of the world, a lack of schema for powerful women in that world, is what kept my sister from playing Sacajawea, reporting back with Lewis and Clark, her twin boy cousins. I hadn’t learned to expect to see women in the White House as anything other than first ladies, despite having read through countless books providing stories of men to populate a constructed world. I’d read these books, of course, at the place I had taken my son on that freezing Saturday -- my local library.

Leaving the library, I pushed my son north on St. Nicholas Avenue. My voice described what we passed -- "There’s a streetlamp, and a WALK signal. This street is 1-7-9, which is one more than 1-7-8 and one less than 1-8-0. Look there’s a yellow light, it means slow down. That car has its hazards on, those lights are for parking illegally." My mind sorted through the way each incident in his young life left a trace of information, a tiny dot. Even in its flawed form, “White House” was only possible because I’d connected dots between texts. A few days before he read Trains Go to me, I had noticed my son apparently doing the same thing. Sorting his library before bedtime, he double-fisted Sandra Boynton books, opening The Going to Bed Book, then Opposites. Like most Boynton books, these two shared an ensemble of cartoonish animals: a moose, a hippopotamus, a rabbit, a cat. He opened both books and compared them, turning a page in one, then looking at the other, turning a page in that one, then looking at the first book. This moment returned to me on the cold walk home, and I did a quick census. Only the hippopotamus had a clearly stated gender. This is a problem, I thought.

It could only be fixed by camel.

“Fixed by Camel” was a phrase my father, a one-time carpenter, had used after reassembling my son’s crib in our new apartment. At Christmas, to clarify, he gave my son Jacquelyn Reinach’s Sweet Pickles book Fixed by Camel, a gift as much for me -- and for him -- as it was for my son. With the book in my hands, I immediately remembered Clever Camel and her brick-orange, long-sleeved coverall jumpsuit. How she outsmarts Kidding Kangaroo when he strands her on a roof, traps her under a manhole cover, and defaces the park benches she paints. How she captures him in a jungle gym snare, lured by an irresistible sign reading "DANGER DO NOT RING DOORBELL UNDER ANY CIRCUMSTANCES." How she also leaves a glass of milk and two peanut butter sandwiches, which she tells him he should eat while he waits for her sidewalk to dry. I always loved that Camel -- calm, solutions-oriented, quick-witted, and compassionate -- was female. The writer’s choice to explicitly gender her felt radical to me, risky, rare. It added depth and surprise, two qualities Aimee Bender calls universal pleasures in a close read of her infant twins’ favorite book, Goodnight Moon.

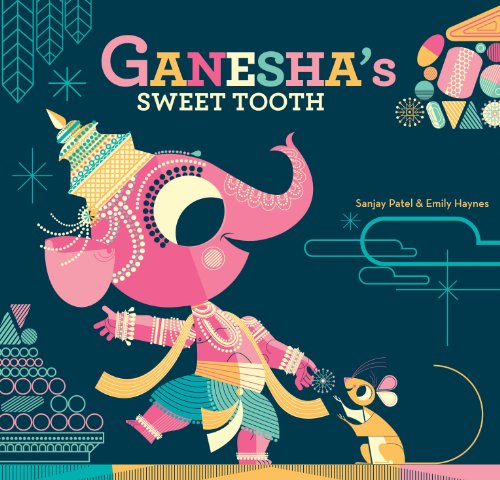

After our trip to the library, I sat down with my son and Fixed by Camel. As with A is for Activist and A Rule is to Break: The Child’s Guide to Anarchy, as with Ganesha’s Sweet Tooth and Press Here, the first read felt more for my pleasure than for his. Clever Camel might have been a revelation for me as a child, a fixed point from which I could explore the idea -- gender -- that I still don’t understand. But my guidepost can’t be my son’s. He’ll find his own, in time, and I can hope that he does that because I help him ask better questions, no matter what those questions, or their answers, might be. This thought chased my disappointment with his reaction to the book. There’s nothing broken, nothing to be fixed. Aristotle didn’t have the last word on imitation; the modernists and phenomenologists saw to that. “Of course, works of art imitate the objects they represent, but their end is certainly not to represent them,” Jacques Lacan once wrote. “In offering the imitation of an object, they make something different out of that object. Thus they only pretend to imitate.” Whether or not my son’s imitations of men and what they should love are pretend, they are his attempts to be in the world and to love it. Every time he surprises me with that sort of expression, I need to be grateful beyond words. Imposing my version of gender, my preference for skepticism and nonconformity, isn’t any more appropriate or healthy than forcing him into army fatigue onesies or calling him “Daddy’s Big Guy.” Yes, I hope to expose him to stated versions of all kinds of gender, not only your Gymboree binary, not only Boynton’s sexless bestiary. Yes, I plan to fight the tendency to make the default gender male. But most of all, I think of the gift my father gave to his son when he gave Fixed by Camel to mine. The book transmitted a spark, bequeathing to both my son and me an encounter with surprise and depth. It’s that electricity I want to conduct.
My father’s easy transfer of precise kindness is something I may well spend my life learning to imitate.

Image Credit: Pixabay.

It’s All in Your Head: The Problems With Jonah Lehrer’s Imagine

1.

Not too long ago, the idea that “you are your brain” was the revolutionary mantra of a handful of scientists, but today it raises hardly an eyebrow among the general public. The brain has become, for many, synonymous with the biological machinations of the self, and the self-knowledge promised by neuroscience has ignited a hunger to understand how it weighs in on age-old questions: Do we have free will? How do we make decisions? What happens when we fall in love? Why do we make art? Imagine, Jonah Lehrer’s polymathic new book, is poised to feed this hunger. Blurring the lines between science writing, self-help, and cultural criticism with virtuosic ease, Imagine explores fields as disparate as neuroscience, sociology, and urban planning with the promise not only to explain how creativity works, but how you, too, can use these secrets to unlock your own creativity, and how we can collectively build a more creative culture.

The book ranges across a dizzying array of examples of the creative process, from Bob Dylan to the team at Pixar to the tech boom in Tel Aviv, creating a mash-up of anecdotes, science reporting and associative interjections from the humanities. In the second chapter alone, we get the guy who invented Scotch Tape; a psychologist who uses EEG to study the brain while people solve puzzles; a neuroscientist who studies insight; a passage from David Hume; a neurologist who is studying daydreaming; the invention of Post-Its; the classic children’s book Harold and the Purple Crayon...and the list goes on. To say that the density and diversity of sources marshaled here are impressive would be a massive understatement. As in Proust Was a Neuroscientist and How We Decide, Lehrer has invited an eclectic mix of guests to his dinner party, and getting them all in the same room to see what happens is a rare achievement. But his real talent lies in the way he plays all these sources off each other in order to build a coherent argument, leaping from the story of how Barbie dolls were born when an American housewife saw a pornographic doll in the window of a German cigar shop to how seeing one’s work with fresh eyes is “one of the central challenges of writing” to the neural pathways involved in reading and writing in order to demonstrate that “the only way to be creative over time -- to not be undone by our expertise -- is to experiment with ignorance, to stare at things we don’t fully understand.” To cap off this particular moment, Lehrer offers a toast to the poet Samuel Coleridge, who said he attended public chemistry lectures in London to “renew my stock of metaphors.”

Imagine uses the same mash-up method that was so successful in How We Decide, but the science of creativity simply isn’t as developed as the science of decision-making. Because of this, it turns out that Lehrer’s tried-and-true method doesn’t work quite as well. The difficulty with pinning down creativity -- scientifically or otherwise -- becomes obvious when you consider the diversity of anecdotal examples in the book. Is writing a song comparable to coming up with new uses for glue or solving a puzzle that has only one correct answer? Is the person who writes twenty cookie-cutter novels engaged in the same activity as the person who writes one book so unprecedented that it changes the trajectory of literature? Are any two creative processes really the same? At most, it seems that one could point out patterns, but Lehrer boldly sets his sights on formula.

Imagine argues that “creativity is a catchall term for a variety of distinct thought processes” and that by understanding these processes we can all learn to be more creative. The more people you talk with, and the more diverse those people are, the better. Companies that wish to encourage creativity should have everyone use a bathroom in a centralized place, like Pixar does. If we want to be a more creative society, we should lighten up on copyright laws and share ideas, like they do in Silicon Valley and Tel Aviv. The scope widens until, by the end, Lehrer is advocating policy changes in areas such as education, copyright law, and immigration. He argues, for example, that because immigrants submit a disproportionate number of patent applications in the U.S., it seems that, as measured by the metric of patents, at least, more immigrants could make America a more creative country.

Trumpeted as “something of a popular science prodigy” by The New York Times, Lehrer has become a translator and ambassador, someone readers trust to explain what is going on in all those ivory towers full of beakers and cell cultures and genetically-engineered mice. Besides his two hugely successful books, he is a contributing editor at Wired, a frequent guest on WNYC’s RadioLab, a regular contributor to The New Yorker, and a science columnist for The Wall Street Journal. For many readers he is the face of science in popular culture. And for good reason. He has repeatedly proven his skill at wrestling complex scientific ideas into nuanced and accurate discussions accessible to non-scientists. Take, for example, his excellent Wall Street Journal column in which he writes insightfully about the limitations of fMRI, a widely used brain-imaging technology with difficult-to-interpret data that ignites heated disputes both inside and outside scientific circles. Lehrer is also an expert and captivating storyteller, and Imagine aims high in grappling with the extremely difficult task of communicating subtle and complex ideas in an engaging way.

But Lehrer’s role as liaison comes with a degree of responsibility; most readers trust that he is explaining science accurately and drawing reasonable conclusions based on the data at hand. Lehrer’s polished style, affable enthusiasm, and obvious intelligence make it tempting not to question the science as he sees it. All the more troubling, then, that right from the outset of Imagine there are signs that science may be taking a backseat to story:

Most cognitive skills have elaborate biological histories, so their evolution can be traced over time. But not creativity -- the human imagination has no clear precursors…The birth of creativity, in other words, arrived like any insight: out of nowhere.

If there are any truths in biology, one is that nothing arrives “out of nowhere.” For almost the whole recorded history of science, people believed that we may be the exception. For years, scientists thought we were different because we use tools. Not so, as it turned out. Chimpanzees have us there. And gorillas and orangutans and some other primates. And birds. And elephants. And a few bottlenose dolphins. Even ants use grain to carry honey. Until very recently, many scientists thought language set us apart, but in the past ten years, researchers have observed precursors to human speech in primate vocalizations and striking similarities between how infants learn to speak and songbirds learn to sing. Even self-awareness, a treasured feature of human consciousness, is no longer considered unique to humans. It’s tempting to think that we are special, but today most researchers agree with Darwin’s eloquent observation that humans are animals, too; we are different in degree rather than kind. There’s no reason to think that creativity will be the exception.

The real problem is that claiming creativity’s exceptional status makes for a better story: if creativity is what sets us apart from the animals, understanding this faculty is tantamount to unlocking the mystery of who and what we are. As Lehrer writes, “Until we understand the set of mental events that give rise to new thoughts, we will never understand what makes us so special.” This claim raises the stakes for the book. The problem is, it’s probably just not true.

2.

These few sentences set off some unexpected alarm bells, so we decided to take a closer look at some of the science upon which Imagine is built, specifically neuroscience, as that’s what Lehrer is best known for and where his greatest expertise lies. In the fourth chapter, for example, Lehrer assembles an impressive array of anecdotes and neuroscience results to explain why “letting go” is “an extremely valuable source of creativity.” “The act of letting go,” he declares, “has inspired some of the most famous works of modern culture, from John Coltrane’s saxophone solos to Jackson Pollock’s drip paintings.” So how does letting go, Lehrer asks, lead to creativity? “The story begins in the brain,” he claims, and turns to a neuroimaging experiment in which jazz pianists were asked to improvise new tunes while in a brain scanner. During improvisation, the scanner picked up a surge of activity in a brain area previously linked to self-expression. At the same time, the scientists also observed a sharp decrease in brain activity in an area previously linked to impulse control. Lehrer concludes, “This suggests that the musician was engaged in a kind of storytelling, searching for the notes that reflected her personal style...The musicians were inhibiting their inhibitions, slipping off those mental handcuffs.” At first pass, this interpretation sounds pretty convincing: the self-control center of the brain shuts down to clear the path for unfettered self-expression.

Except that it’s impossible to draw that conclusion from the data at hand. This is an example of a common logical fallacy that plagues the interpretation of neuroimaging data. Say you notice a crowd of people at your neighbor’s house one night, and then find out she is throwing a party. You can correctly conclude that whenever your neighbor throws a party, there will be people at her house. On another night, you again notice a crowd of people at her house, and you conclude she is throwing a party -- but this time you’re wrong. She is hosting a church group. While you can conclude that a party means there will be people, you cannot conclude that people means a party.

This reasoning fails because brain regions, like houses, have many functions. If you scan the brains of 100 people while they add 2+2, and in every case the same little patch of cortex jumps into action, it’s safe to infer that the cognitive act of adding 2+2 is related to activity in that brain region. So far so good. (What the region might actually be doing -- adding, focusing on the number 2, catching errors -- is a whole separate problem.) It’s tempting to say, then, that every time researchers observe that little patch of cortex lighting up, it must mean that the person in the scanner is engaged in adding 2+2. After all, it’s the 2+2 part of the brain, right? That’s where intuition can lead you astray. There is not a measurable one-to-one mapping between any brain region and any particular cognitive process; the same little patch of cortex is likely involved in multiple functions, just as a house can be filled with people for many different reasons. So when you see the patch of cortex light up under the scanner, you can’t say the person is adding 2+2. Likewise, if a brain region previously linked to “self-expression” lights up while improvising music, you can’t say -- as Lehrer does -- that the musician was “engaged in a kind of storytelling.”

This claim is all the more surprising because Lehrer is clearly familiar with this logical fallacy. In the Wall Street Journal column about fMRI data mentioned earlier, he offers an elegant discussion of this very problem:

Consider an op-ed piece recently published in the New York Times, which used fMRI results to demonstrate, purportedly, that people “literally love their iPhones.” The evidence? When the researchers showed subjects a video of a ringing cellphone, a part of the brain called the insula exhibited a spike in activity. Because previous studies have linked the insula with feelings of love, the authors concluded that the gadget had become a “romantic rival” for husbands and wives.

But here’s the problem: The insula is also activated by feelings of disgust and bodily pain. It plays an important role in coordinating hand movement, maintaining balance and monitoring bodily changes. In fact, activity in the insula has been implicated in nearly a third of all fMRI papers. Because the brain is such a vast knot of connections, it’s often impossible to understand what's happening based on local patterns of activity. Perhaps we’re disgusted by our iPhones, or maybe the insula is just preparing the fingers to move. The pretty picture can’t reveal the answer.

So what’s going on? It’s baffling, really, that in Imagine Lehrer makes statements so similar to ones he thoroughly discredits in his column.

And the problems continue to arise. Near the end of the same chapter, Lehrer presents what appears to be the most convincing piece of evidence yet that inhibiting self-control enhances creativity. He reports a study in which the researcher used a harmless technique called TMS to disrupt brain activity in regions previously implicated in impulse control while the subjects drew sketches of animals. Before TMS, Lehrer reports that their drawings were “crude stick figures.” But during TMS, they exhibited “strange, new talents.” Their figures were “suddenly filled with artistic flourishes.” The section concludes with the comforting bromide that we all have inner artists, if only the brain’s inhibitory mechanisms wouldn’t “constantly hold back our latent talents.”

We were curious to see these “before” and “after” drawings, so we looked up the study. Upon viewing the drawings we felt a bit misled by Lehrer’s claim that dampening activity in the brain area he connects to impulse control led to “strange, new talents.” These before and after drawings, for example, seem to be just slightly different versions of a horse:

Savant-like skills exposed in normal people by suppressing the left fronto-temporal lobe. Allan W. Snyder, Elaine Mulcahy, Janey L. Taylor, D. John Mitchell, Perminder Sachdev, and Simon C. Gandevia, Journal of Integrative Neuroscience, Vol 2, No. 2, 149-158, © 2003, World Scientific.

One might even argue that the saddle in the “before” drawing on the left represents an “artistic flourish” absent in the “after” drawings on the right. In the paper, even the researchers themselves did not claim to have observed any great shift in artistic performance. They concluded that the technique “did not lead to a systematic improvement in naturalistic drawing ability,” although the drawings did show a “change of scheme or convention.” These less-than-definitive results, coupled with the fact that the details of how TMS affects brain activity are poorly understood, render any hypothesis about this brain area and “creativity” speculative. The researchers do argue for such a link elsewhere, and even if this unproven hypothesis turns out to be true, to say that this study supports the chapter’s claims that “the timid circuits of the prefrontal cortex keep us from risking self-expression” is still problematic. The book is representing speculation as fact. While isolated moments like these may or may not be indicative of a larger pattern, they do raise doubts about both how science is represented throughout the book and the way it is used to support Lehrer’s claims.

If dubious interpretations of scientific data appeared only once in Imagine, it might be a worrisome fluke; but they appear multiple times, which is cause for real concern. Lehrer steps over the line again when connecting amphetamine use to creativity. He states that “Because the dopamine neurons in the midbrain are excited...the world is suddenly saturated with intensely interesting ideas.” Such definitive statements imply that neuroscience has already charted a causal course from neurotransmitter chemistry to a complex cognitive process -- which simply isn’t true. That it should have come from a writer who so clearly has the ability to write about science critically and intelligently still comes as a bit of a surprise.

3.

All writers who translate neuroscience for the general public today work under a tremendous pressure to provide easy answers. And it’s not just writers who feel this pressure. So do scientists. It’s possible that Imagine is reflecting the sometimes unsavory habits of scientists who are worried about getting the sort of results that will ensure the millions of dollars in funding necessary to continue their research and move forward in their scientific careers. These habits often bleed over into the way scientists relate their work to journalists. The researcher who had subjects draw the “before” and “after” horses was quoted in The New York Times as calling TMS “a creativity-amplifying machine.” This sort of comment implies a causal link that has not yet been scientifically established, and it can tempt journalists into overstatement. Nevertheless, it is the job of the science writer to represent science as it is, to report on the often ambiguous reality of the scientific process -- not to suggest certainty where it does not exist, even if it may seem more appealing to readers.

Everyone is looking for answers. By understanding the brain, the thinking goes, we can better understand ourselves and therefore change -- our habits, diets, workplaces -- in order to be better, happier versions of ourselves. This promise fuels neuroscience’s great popular appeal. However, while today’s neuroscience offers a deeper understanding of the brain than ever before, it is still incomplete. It is far from providing the answers, or advice, that readers might find most satisfying. In the introduction, Imagine promises to deliver “what creativity is...how creativity works” and how “we can make it work for us” by revealing different types of creativity at work in different regions of the brain. This promise defies the reality of current brain science: despite the incredible progress of the past century, scientists really know very little about how the organ works, and can only postulate how neural mechanisms might be related to mind and behavior. People are looking, too soon, to neuroscience for answers.

We need good translators of science to the general public, and Lehrer has the public’s ear and the public’s trust. He is at his best when putting his considerable talents to the task of telling a story that is true according to the facts as we know them, rather than telling a story people want to hear.

Following the Moon: Plot and the Novels of Tana French

In Harold and the Purple Crayon, that beloved children's book by Crockett Johnson, the moon that Harold draws at the beginning of the story is what allows him to return to his bedroom at the end of the story. In fact, once Harold draws his purple moon, it appears on every page. It has to--it's lighting his way. Not that a reader, young or old, would necessarily notice its ubiquity on first read. It's not until afterward, or on subsequent readings, that Johnson's superb and simple plotting reveals itself. The moon was there, all along, waiting for the climax. Its purpose in the story is, as Aristotle put it, surprising and inevitable.

I was reading (and re-reading) Harold and the Purple Crayon soon after I'd discovered the work of Tana French, the Irish crime writer of prodigious talents who has published a trio of novels about detectives in Dublin. French got me thinking a lot about plot precisely because she writes mysteries, a genre that requires the most tightly constructed stories: the moon must be gracefully and subtly placed, or you risk losing your reader. I write this with confidence, even though I've read very little crime fiction in my life. I'm the kind of reader who devours episode after episode of Law and Order: SVU and then repairs to the bath (the bawth) to read a novel free of blood, murder, and so on.

I was excited to tear into French's first novel, In the Woods. I imagined myself staying up all night, rushing to the story's end. I figured that homicide Detectives Rob Ryan and Cassie Maddox, investigating the murder of a young girl, would be literary versions of Detectives Elliot Stabler and Olivia Benson. I moronically told anyone who would listen what I was planning to read. "A whodunit!" I cried. "A police procedural!" I wanted blood, and detectives with latex gloves.

What I got was a few lessons on plot.

If a scene is the completion of an action in a specific time and place, then plot is...what, exactly? I'd venture to say that it's the relationship between these scenes. It's the irresistible pull of--and meaningful accumulation of--cause and effect. ("The king died and then the queen died of grief," as E.M. Forster famously put it.) It's the moon planted at the beginning of the story, paying off at the end.

But there's more. Beyond the world of storytelling, plot is defined as a secret scheme to reach a specific end. Or it's a parcel of land. Or it means to mark a graph, chart, or map: the plotting shows us what has changed; our ship is headed this way. To a writer (me) interested in (obsessed with?) plot-making, all of these are significant definitions. The lessons abound. I once read somewhere that Margaret Atwood compared novel writing to performing burlesque: don't take off your clothes too slowly, she advised, or the reader will get bored; get naked too fast, and the entertainment ends before it can really begin. I put that in my plot-pocket, too.

So how did French's books help and influence my thoughts on plot? Here it goes:

1. Call me ignorant, but I was surprised that In the Woods didn't move as swiftly as my favorite hour-long network cop dramas. There was air around the clue-finding, and the mystery didn't unravel as cleanly as I expected. It might have if the story's protagonist, Detective Ryan, weren't so damaged, haunted as he is by a second (and unsolved) crime that happened when he was a boy. The thing is, were Detective Ryan not haunted, the story would lack not only emotional weight, but its narrative engine, too. Ryan's internal conflict feeds the external one. As with all good stories, character nurtures plot, emerges from it. The most dramatic element of the narrative is the relationship between Detectives Ryan and Maddox, and how the murder case they're investigating strengthens and then threatens that relationship. The scenes of them drinking wine in Maddox's attic flat, and the passages about their partnership and the shared understanding between them, feed the thrill of the crime-solving, even as they divert from it.

Lesson: Although the reader wants to find out what happens, longs to have the mystery revealed, the mysteries of existence, of human interaction, which aren't so easy to solve, are often the most pleasurable to experience on the page. A writer need not move inexorably toward the finish line. The asides, the exhales, are allowed. They are required.

2. I often hear people say that with genre fiction (and addictive young adult fiction), plot trumps prose. The writing needs to be invisible, they say, so that story can take center stage. But with French's work that isn't the case. Her prose is sharp and beautiful, and it draws attention to itself. French isn't a sentence acrobat like Sam Lipsyte, but her prose is certainly visible. In The Likeness, French's second novel, narrated by Detective Cassie Maddox, we get fun phrases like, "I hate nostalgia, it's laziness with prettier accessories," and "The lights of the house spun blurred and magic as the lights of a carousel." This kind of writing calls to mind what John Gardner dubbed the "foreplay paragraph," one that makes you want to read faster, to find out what happens, but which nevertheless keeps you anchored to it because the sentences are so well-constructed, so...sexy. It's the writing that makes you not skip ahead: to the dead body, the nudity, the climax.

Now, I admit, The Likeness, my favorite of French's novels, has a pretty unbelievable premise: a dead woman is discovered who looks just like Cassie...and this corpse also happens to be carrying identification that claims she's Lexie Madison, Cassie's former undercover alias. From there, Cassie infiltrates the victim's tight-knit group of friends, posing as Lexie (the survived version). It's a Gothic The Secret History, with more secrets and more police.

The absurd doppelganger premise is saved, I think, by Cassie's voice. That is, by the prose. Who cares if what brings Cassie back to her undercover identity is a touch far-fetched if the descriptions are so right on? What French really wants us to focus on is the delicious and dangerous pull Cassie feels toward this isolated group of friends in their big, crumbling house. And our narrator describes the seduction of belonging so, so well.

Lesson: What Gary Lutz calls "page-hugging" prose isn't necessarily anathema to plot. The descriptions in The Likeness may force the reader to slow down to savor the imagery and the sense of place (that plot of land), but they also serve to emphasize Cassie's growing attachment to the crime's possible suspects. As with In the Woods, what threatens the investigation magnifies the relationship between its players and its deeper meanings, and it makes solving the investigation that much more fun for the reader. Beautiful prose begets a beautiful plot.

3. By the time I got to French's third novel, Faithful Place, I was able to figure out who the killer was fairly quickly. I'm not sure if that's because I'd gotten more adept at reading crime novels, or if French made it easy. The thing is, it didn't matter; I was still hooked to the story.

At the beginning of Faithful Place, undercover cop Frank Mackey is drawn back to the working-class neighborhood he left at age nineteen, vowing never to return. He'd planned to go to England with his girlfriend, Rosie Daly, but she took off without him--or so he assumed. Twenty-two years later, when Rosie's packed suitcase is discovered in an abandoned house on his old street, Frank must not only reckon with what really happened to his first love, he must also face the dysfunctional family he's tried so hard to leave behind. Juicy, right? But what happened to Rosie becomes secondary to Frank's conflicts with his family, to his (impossible?) desire to escape his past and class.

What I love about French's work is how she refuses to answer every question the story raises; in fact, sometimes the ones she does answer feel a little too easy, as if borrowed from a lesser, more simplistic narrative (see the less-than-stellar conclusion of In the Woods). She is better at vague, I think, more comfortable with loose ends. As Laura Miller points out in Salon:

French herself doesn't play by the rules, and the prime rule of crime fiction, no matter how grisly, cynical or edgy, is that the plot begins with a disruption of order (the crime itself) and ends with the restoration of it, albeit in some slightly battered form. The guilty parties are identified and usually punished, secrets are unearthed and, above all, the world returns to intelligibility, however bitter the message it has to tell.

The crime is solved in Faithful Place, but it isn't until after the killer is revealed that the book's grace becomes apparent. With the crime figured out, Frank and the reader must wrestle with bigger questions, discomforts and difficulties. There's a darkness to the ending that's deeply moving.

Lesson: A scene should raise multiple questions, but the scene that follows isn't required to answer everything. Some questions can be carried from scene to scene, through an entire book, teasing the reader, or they can be posed in the final pages. The burlesque dancer might want to leave her brassiere on, and it can still be a damn fine show. Or: she can show you her tits, and you might be up all night thinking about her wrists, which had been covered all along.

4. In the Woods teaches us how to solve a murder, and, more importantly, how to work a case with a partner. (Or, maybe, how to botch that partnership.)

Detective Ryan says:

I wish I could tell you how an interrogation can have its own beauty, shining and cruel as that of a bullfight; how in defiance of the crudest topic or the most moronic suspect it keeps inviolate its own taut, honed grace, its own irresistible and blood-stirring rhythms; how the great pairs of detectives know each other's every thought as surely as lifelong ballet partners in a pas de deux...

The Likeness teaches us how to go undercover. As Cassie tells us:

"...bad stuff happens to undercovers. A few of them get killed. More lose friends, marriages, relationships. A couple turn feral, cross over to the other side so gradually that they never see it happening till it's too late, and end up with discreet, complicated early-retirement plans. Some, and never the ones you'd think, lose their nerve--no warning, they just wake up one morning and all at once it hits them what they're doing, and they freeze like tightrope walkers who've looked down...And some go the other way, the most lethal way of all: when the pressure gets to be too much, it's not their nerve that breaks, it's their fear. They lose the capacity to be afraid, even when they should be."

Faithful Place teaches how to lead your own private investigation, how to take your work home with you; Frank isn't supposed to be on the Rosie investigation, but he must figure out what happened. As with the other two books, there are also nuggets of professional wisdom throughout. For instance, we learn that an undercover cop learns to flick a switch in his mind so that "the whole scene unfolds at a distance on a pretty little screen, while you watch and plan your strategies and give the characters a nudge now and then, alert and absorbed and safe as a general."

What Faithful Place taught me best, though, is how to be working class Irish. What to eat and drink, how to say "Jaysus" instead of "Jesus," and what to call the new middle class neighbors: "epidural yuppies."

Lesson: Mysteries, and detective novels in particular, are how-to manuals in a sense. Part of their magnetism is that they teach readers how to be bad-ass cops: brave, sharp, maybe even crooked. But, really, there's an instructional aspect to every story. The reader is learning the world of the characters, and the rules therein, and it's pleasurable to be immersed in that day-to-day experience, in the expertise of others. The writer is teaching you how to live as someone else. She is also teaching you how to read her narrative. The writer guides your expectations. This is how plot works in this unique narrative.

You see, Tana French taught me that plot is a strange and amorphous aspect of craft, never a one-formula-fits-all kind of thing. (What in fiction is?) Sometimes the moon's on page two, and sometimes it isn't. Sometimes you're reading for the moon, and sometimes you're reading because you like the color purple, or Harold's little jumpsuit, or Harold himself. Will he make it home safe? What does his journey even mean, anyway?

Goodnight Stars, Goodnight Air: Reconnecting with Children’s Books as a Parent

The books that parents read to their very young children don’t change much from generation to generation. When my son was born two years ago, I was surprised to find that, with few exceptions, the titles we welcomed into our Philadelphia apartment were the same ones that three decades earlier had served as my own introduction to storytelling.

I made an informal study of the Amazon sales rankings of the books I most enjoyed having read to me as a kid. It seemed to confirm that taste in books for young children is remarkably constant. Here are just a handful of popular titles with their publication years and their overall Amazon ranks: