
Most Anticipated: The Great Winter 2025 Preview

It's cold, it's grey, it's bleak—but winter, at the very least, brings with it a glut of anticipation-inducing books. Here you’ll find nearly 100 titles that we’re excited to cozy up with this season. Some we’ve already read in galley form; others we’re simply eager to devour based on their authors, subjects, or blurbs. We'd love for you to find your next great read among them.

The Millions will be taking a hiatus for the next few months, but we hope to see you soon.

—Sophia Stewart, editor

January

The Legend of Kamui by Shirato Sanpei, tr. Richard Rubinger (Drawn & Quarterly)

The epic 10-volume series, a touchstone of longform storytelling in manga now published in English for the first time, follows outsider Kamui in 17th-century Japan as he fights his way up from peasantry to the prized role of ninja. —Michael J. Seidlinger

The Life of Herod the Great by Zora Neale Hurston (Amistad)

In the years before her death in 1960, Hurston was at work on what she envisioned as a continuation of her 1939 novel, Moses, Man of the Mountain. Incomplete, nearly lost to fire, and now published for the first time alongside scholarship from editor Deborah G. Plant, Hurston’s final manuscript reimagines Herod, villain of the New Testament Gospel accounts, as a magnanimous and beloved leader of First Century Judea. —Jonathan Frey

Mood Machine by Liz Pelly (Atria)

When you eagerly posted your Spotify Wrapped last year, did you notice how much of what you listened to tended to sound... the same? Have you seen all those links to Bandcamp pages your musician friends keep desperately posting in the hopes that maybe, just maybe, you might give them money for their art? If so, this book is for you. —John H. Maher

My Country, Africa by Andrée Blouin (Verso)

African revolutionary Blouin recounts a radical life steeped in activism in this vital autobiography, from her beginnings in a colonial orphanage to her essential role in the continent's midcentury struggles for decolonization. —Sophia M. Stewart

The First and Last King of Haiti by Marlene L. Daut (Knopf)

Donald Trump repeatedly directs extraordinary animus towards Haiti and Haitians. This biography of Henry Christophe—the man who played a pivotal role in the Haitian Revolution—might help Americans understand why. —Claire Kirch

The Bewitched Bourgeois by Dino Buzzati, tr. Lawrence Venuti (NYRB)

This is the second story collection, and fifth book, by the absurdist-leaning midcentury Italian writer—whose primary preoccupation was war novels that blend the brutal with the fantastical—to get the NYRB treatment. May it not be the last. —JHM

Y2K by Colette Shade (Dey Street)

The recent Y2K revival mostly makes me feel old, but Shade's essay collection deftly illuminates how we got here, connecting the era's social and political upheavals to today. —SMS

Darkmotherland by Samrat Upadhyay (Penguin)

In a vast dystopian reimagining of Nepal, Upadhyay braids narratives of resistance (political, personal) and identity (individual, societal) against a backdrop of natural disaster and state violence. The first book in nearly a decade from the Whiting Award–winning author of Arresting God in Kathmandu, this is Upadhyay’s most ambitious yet. —JF

Metamorphosis by Ross Jeffery (Truborn)

From the author of I Died Too, But They Haven’t Buried Me Yet, a woman leads a double life as she loses her grip on reality by choice, wearing a mask that reflects her inner demons, as she descends into a hell designed to reveal the innermost depths of her grief-stricken psyche. —MJS

The Containment by Michelle Adams (FSG)

Legal scholar Adams charts the failure of desegregation in the American North through the story of the struggle to integrate suburban schools in Detroit, which remained almost completely segregated nearly two decades after Brown v. Board. —SMS

Death of the Author by Nnedi Okorafor (Morrow)

African Futurist Okorafor’s book-within-a-book offers interchangeable cover images, one for the story of a disabled, Black visionary in a near-present day and the other for the lead character’s speculative posthuman novel, Rusted Robots. Okorafor deftly keeps the alternating chapters and timelines in conversation with one another. —Nathalie op de Beeck

Open Socrates by Agnes Callard (Norton)

Practically everything Agnes Callard says or writes ushers in a capital-D Discourse. (Remember that profile?) If she can do the same with a study of the philosophical world’s original gadfly, culture will be better off for it. —JHM

Aflame by Pico Iyer (Riverhead)

Presumably he finds time to eat and sleep in there somewhere, but it certainly appears as if Iyer does nothing but travel and write. His latest, following 2023’s The Half Known Life, makes a case for the sublimity, and necessity, of silent reflection. —JHM

The In-Between Bookstore by Edward Underhill (Avon)

A local bookstore becomes a literal portal to the past for a trans man who returns to his hometown in search of a fresh start in Underhill's tender debut. —SMS

Good Girl by Aria Aber (Hogarth)

Aber, an accomplished poet, turns to prose with a debut novel set in the electric excess of Berlin’s bohemian nightlife scene, where a young German-born Afghan woman finds herself enthralled by an expat American novelist as her country—and, soon, her community—is enflamed by xenophobia. —JHM

The Orange Eats Creeps by Grace Krilanovich (Two Dollar Radio)

Krilanovich’s 2010 cult classic, about a runaway teen with drug-fueled ESP who searches for her missing sister across surreal highways while being chased by a killer named Dactyl, gets a much-deserved reissue. —MJS

Mona Acts Out by Mischa Berlinski (Liveright)

In the latest novel from the National Book Award finalist, a 50-something actress reevaluates her life and career when #MeToo allegations roil the off-off-Broadway Shakespearean company that has cast her in the role of Cleopatra. —SMS

Something Rotten by Andrew Lipstein (FSG)

A burnt-out couple leave New York City for what they hope will be a blissful summer in Denmark, but their vacation derails after a close friend is diagnosed with a rare illness and their marriage is tested by toxic influences. —MJS

The Sun Won't Come Out Tomorrow by Kristen Martin (Bold Type)

Martin's debut is a cultural history of orphanhood in America, from the 1800s to today, interweaving personal narrative and archival research to upend the traditional "orphan narrative," from Oliver Twist to Annie. —SMS

We Do Not Part by Han Kang, tr. E. Yaewon and Paige Aniyah Morris (Hogarth)

Kang’s Nobel win last year surprised many, but the consistency of her talent certainly shouldn't now. The latest from the author of The Vegetarian—the haunting tale of a Korean woman who sets off to save her injured friend’s pet at her home in Jeju Island during a deadly snowstorm—will likely once again confront the horrors of history with clear eyes and clarion prose. —JHM

We Are Dreams in the Eternal Machine by Deni Ellis Béchard (Milkweed)

As the conversation around emerging technology skews increasingly to apocalyptic and utopian extremes, Béchard’s latest novel adopts the heterodox-to-everyone approach of embracing complexity. Here, a cadre of characters is isolated by a rogue but benevolent AI into controlled environments engineered to achieve their individual flourishing. The AI may have taken over, but it only wants the best for us. —JF

The Harder I Fight the More I Love You by Neko Case (Grand Central)

Singer-songwriter Case, a country- and folk-inflected indie rocker and sometime vocalist for the New Pornographers, takes her memoir’s title from her 2013 solo album. Followers of PNW music scene chronicles like Kathleen Hanna’s Rebel Girl and drummer Steve Moriarty’s Mia Zapata and the Gits will consider Case’s backstory a must-read. —NodB

The Loves of My Life by Edmund White (Bloomsbury)

The 85-year-old White recounts six decades of love and sex in this candid and erotic memoir, crafting a landmark work of queer history in the process. Seminal indeed. —SMS

Blob by Maggie Su (Harper)

In Su’s hilarious debut, Vi Liu is a college dropout working a job she hates, with nothing really working out in her life, when she stumbles across a sentient blob that she begins to transform into her ideal, perfect man, one who just might resemble actor Ryan Gosling. —MJS

Sinkhole and Other Inexplicable Voids by Leyna Krow (Penguin)

Krow’s debut novel, Fire Season, traced the combustible destinies of three Northwest tricksters in the aftermath of an 1889 wildfire. In her second collection of short fiction, Krow amplifies surreal elements as she tells stories of ordinary lives. Her characters grapple with deadly viruses, climate change, and disasters of the Anthropocene’s wilderness. —NodB

Black in Blues by Imani Perry (Ecco)

The National Book Award winner—and one of today's most important thinkers—returns with a masterful meditation on the color blue and its role in Black history and culture. —SMS

Too Soon by Betty Shamieh (Avid)

The timely debut novel by Shamieh, a playwright, follows three generations of Palestinian American women as they navigate war, migration, motherhood, and creative ambition. —SMS

How to Talk About Love by Plato, tr. Armand D'Angour (Princeton UP)

With modern romance on its last legs, D'Angour revisits Plato's Symposium, mining the philosopher's masterwork for timeless, indispensable insights into love, sex, and attraction. —SMS

At Dark, I Become Loathsome by Eric LaRocca (Blackstone)

After Ashley Lutin’s wife dies, he grieves in a peculiar way, posting online, “If you're reading this, you've likely thought that the world would be a better place without you,” and offering a strange ritual to those who respond to the line, equally grieving and lost, in need of transcendence. —MJS

February

No One Knows by Osamu Dazai, tr. Ralph McCarthy (New Directions)

A selection of stories from across Dazai’s career, translated into English for the first time, demonstrates his penchant for exploring conformity and society’s often impossible expectations of its members. —MJS

Mutual Interest by Olivia Wolfgang-Smith (Bloomsbury)

This queer love story set in post–Gilded Age New York, from the author of Glassworks (and one of my favorite Millions essays to date), explores sex, power, and capitalism through the lives of three queer misfits. —SMS

Pure, Innocent Fun by Ira Madison III (Random House)

This podcaster and pop culture critic spoke to indie booksellers at a fall trade show I attended, regaling us with key cultural moments in the 1990s that shaped his youth in Milwaukee and being Black and gay. If the book is as clever and witty as Madison is, it's going to be a winner. —CK

Gliff by Ali Smith (Pantheon)

The Scottish author has been on the scene since 1997 but is best known today for a seasonal quartet from the late twenty-teens that began in 2016 with Autumn and ended in 2020 with Summer. Here, she takes the genre turn, setting two children and a horse loose in an authoritarian near future. —JHM

Land of Mirrors by Maria Medem, tr. Aleshia Jensen and Daniela Ortiz (D&Q)

This hypnotic graphic novel from one of Spain's most celebrated illustrators follows Antonia, the sole inhabitant of a deserted town, on a color-drenched quest to preserve the dying flower that gives her purpose. —SMS

Bibliophobia by Sarah Chihaya (Random House)

As odes to the "lifesaving power of books" proliferate amid growing literary censorship, Chihaya—a brilliant critic and writer—complicates this platitude in her revelatory memoir about living through books and the power of reading to, in the words of blurber Namwali Serpell, "wreck and redeem our lives." —SMS

Reading the Waves by Lidia Yuknavitch (Riverhead)

Yuknavitch continues the personal story she began in her 2011 memoir, The Chronology of Water. More than a decade after that book, and nearly undone by a history of trauma and the death of her daughter, Yuknavitch revisits the solace she finds in swimming (she was once an Olympic hopeful) and in her literary community. —NodB

The Dissenters by Youssef Rakha (Graywolf)

A son reevaluates the life of his Egyptian mother after her death in Rakha's novel. Recounting her sprawling life story—from her youth in 1960s Cairo to her experience of the 2011 Tahrir Square protests—a vivid portrait of faith, feminism, and contemporary Egypt emerges. —SMS

Tetra Nova by Sophia Terazawa (Deep Vellum)

Deep Vellum has a particularly keen eye for fiction in translation that borders on the unclassifiable. This debut from a poet the press has published twice, billed as the story of “an obscure Roman goddess who re-imagines herself as an assassin coming to terms with an emerging performance artist identity in the late-20th century,” seems right up that alley. —JHM

David Lynch's American Dreamscape by Mike Miley (Bloomsbury)

Miley puts David Lynch's films in conversation with literature and music, forging thrilling and unexpected connections—between Eraserhead and "The Yellow Wallpaper," Inland Empire and "mixtape aesthetics," Lynch and the work of Cormac McCarthy. Lynch devotees should run, not walk. —SMS

There's No Turning Back by Alba de Céspedes, tr. Ann Goldstein (Washington Square)

Goldstein is an indomitable translator. Without her, how would you read Ferrante? Here, she takes her pen to a work by the great Cuban-Italian writer de Céspedes, banned in the fascist Italy of the 1930s, that follows a group of female literature students living together in a Roman boarding house. —JHM

Beta Vulgaris by Margie Sarsfield (Norton)

Named for the humble beet plant and meaning, in a rough translation from the Latin, "vulgar second," Sarsfield’s surreal debut finds a seasonal harvest worker watching her boyfriend and other colleagues vanish amid “the menacing but enticing siren song of the beets.” —JHM

People From Oetimu by Felix Nesi, tr. Lara Norgaard (Archipelago)

The center of Nesi’s wide-ranging debut novel is a police station on the border between East and West Timor, where a group of men have gathered to watch the final of the 1998 World Cup while a political insurgency stirs without. Nesi, in English translation here for the first time, circles this moment broadly, reaching back to the various colonialist projects that have shaped Timor and the lives of his characters. —JF

Brother Brontë by Fernando A. Flores (MCD)

This surreal tale, set in a 2038 dystopian Texas, is a celebration of resistance to authoritarianism, a mash-up of Octavia Butler, Ray Bradbury, and John Steinbeck. —CK

Alligator Tears by Edgar Gomez (Crown)

The High-Risk Homosexual author returns with a comic memoir-in-essays about fighting for survival in the Sunshine State, exploring his struggle with poverty through the lens of his queer, Latinx identity. —SMS

Theory & Practice by Michelle De Kretser (Catapult)

This lightly autofictional novel—De Kretser's best yet, and one of my favorite books of this year—centers on a grad student's intellectual awakening, messy romantic entanglements, and fraught relationship with her mother as she minds the gap between studying feminist theory and living a feminist life. —SMS

The Lamb by Lucy Rose (Harper)

Rose’s cautionary and caustic folk tale is about a mother and daughter who live alone in the forest, quiet and tranquil except for the visitors the mother brings home, whom she calls “strays,” wining and dining them until they feast upon the bodies. —MJS

Disposable by Sarah Jones (Avid)

Jones, a senior writer for New York magazine, gives a voice to America's most vulnerable citizens, who were deeply and disproportionately harmed by the pandemic—a catastrophe that exposed the nation's disregard, if not outright contempt, for its underclass. —SMS

No Fault by Haley Mlotek (Viking)

Written in the aftermath of the author's divorce from the man she had been with for 12 years, this "Memoir of Romance and Divorce," per its subtitle, is a wise and distinctly modern accounting of the end of a marriage, and what it means on a personal, social, and literary level. —SMS

Enemy Feminisms by Sophie Lewis (Haymarket)

Lewis, one of the most interesting and provocative scholars working today, looks at certain malignant strains of feminism that have done more harm than good in her latest book. In the process, she probes the complexities of gender equality and offers an alternative vision of a feminist future. —SMS

Lion by Sonya Walger (NYRB)

Walger—a successful actor perhaps best known for her turn as Penny Widmore on Lost—debuts with a remarkably deft autobiographical novel (published by NYRB no less!) about her relationship with her complicated, charismatic Argentinian father. —SMS

The Voices of Adriana by Elvira Navarro, tr. Christina MacSweeney (Two Lines)

A Spanish writer and philosophy scholar grieves her mother and cares for her sick father in Navarro's innovative, metafictional novel. —SMS

Autotheories ed. Alex Brostoff and Vilashini Cooppan (MIT)

Theory wonks will love this rigorous and surprisingly playful survey of the genre of autotheory—which straddles autobiography and critical theory—with contributions from Judith Butler, Jamieson Webster, and more.

Fagin the Thief by Allison Epstein (Doubleday)

I enjoy retellings of classic novels by writers who turn the spotlight on interesting minor characters. This is an excursion into the world of Charles Dickens, told from the perspective of the iconic thief from Oliver Twist. —CK

Crush by Ada Calhoun (Viking)

Calhoun—the masterful memoirist behind the excellent Also A Poet—makes her first foray into fiction with a debut novel about marriage, sex, heartbreak, and all-consuming desire. —SMS

Show Don't Tell by Curtis Sittenfeld (Random House)

Sittenfeld's observations in her writing are always clever, and this second collection of short fiction includes a tale about the main character in Prep, who visits her boarding school decades later for an alumni reunion. —CK

Right-Wing Women by Andrea Dworkin (Picador)

One in a trio of Dworkin titles being reissued by Picador, this 1983 meditation on women and American conservatism strikes a troublingly resonant chord in the shadow of the recent election, which saw 45% of women vote for Trump. —SMS

The Talent by Daniel D'Addario (Scout)

If your favorite season is awards season, this debut novel from D'Addario, chief correspondent at Variety, is for you: it weaves a yarn centering on five stars competing for the Best Actress statue at the Oscars. If you know who Paloma Diamond is, you'll love this. —SMS

Death Takes Me by Cristina Rivera Garza, tr. Sarah Booker and Robin Myers (Hogarth)

The Pulitzer winner’s latest is about an eponymously named professor who discovers the body of a mutilated man with a bizarre poem left with the body, becoming entwined in the subsequent investigation as more bodies are found. —MJS

The Strange Case of Jane O. by Karen Thompson Walker (Random House)

Jane goes missing after a sudden, debilitating, and dreadful wave of symptoms that include hallucinations, amnesia, and premonitions, calling into question the foundations of her life and reality, motherhood and buried trauma. —MJS

Song So Wild and Blue by Paul Lisicky (HarperOne)

If it weren’t Joni Mitchell’s world with all of us just living in it, one might be tempted to say the octogenarian master songstress is having a moment: this memoir of falling for the blue beauty of Mitchell’s work follows two other inventive books about her life and legacy, Ann Powers's Traveling and Henry Alford's I Dream of Joni. —JHM

Mornings Without Mii by Mayumi Inaba, tr. Ginny Tapley Takemori (FSG)

A woman writer meditates on solitude, art, and independence alongside her beloved cat in Inaba's modern classic—a book so squarely up my alley I'm somehow embarrassed. —SMS

True Failure by Alex Higley (Coffee House)

When Ben loses his job, he decides to pretend to go to work while instead auditioning for Big Shot, a popular reality TV show that he believes might be a launchpad for his future successes. —MJS

March

Woodworking by Emily St. James (Crooked Reads)

Those of us who have been reading St. James since the A.V. Club days may be surprised to see this marvelous critic's first novel—in this case, about a trans high school teacher befriending one of her students, the only fellow trans woman she’s ever met—but all the more excited for it. —JHM

Optional Practical Training by Shubha Sunder (Graywolf)

Told as a series of conversations, Sunder’s debut novel follows its recently graduated Indian protagonist in 2006 Cambridge, Mass., as she sees out her student visa teaching in a private high school and contriving to find her way between worlds that cannot seem to comprehend her. Quietly subversive, this is an immigration narrative to undermine the various reductionist immigration narratives of our moment. —JF

Love, Queenie by Mayukh Sen (Norton)

Merle Oberon, one of Hollywood's first South Asian movie stars, gets her due in this engrossing biography, which masterfully explores Oberon's painful upbringing, complicated racial identity, and much more. —SMS

The Age of Choice by Sophia Rosenfeld (Princeton UP)

At a time when we are awash with options—indeed, drowning in them—Rosenfeld's analysis of how our modern idea of "freedom" became bound up in the idea of personal choice feels especially timely, touching on everything from politics to romance. —SMS

Sucker Punch by Scaachi Koul (St. Martin's)

One of the internet's funniest writers follows up One Day We'll All Be Dead and None of This Will Matter with a sharp and candid collection of essays that sees her life go into a tailspin during the pandemic, forcing her to reevaluate her beliefs about love, marriage, and what's really worth fighting for. —SMS

The Mysterious Disappearance of the Marquise of Loria by José Donoso, tr. Megan McDowell (New Directions)

The ever-excellent McDowell translates yet another work by the influential Chilean author for New Directions, proving once again that Donoso had a knack for titles: this one follows up 2024’s behemoth The Obscene Bird of Night. —JHM

Remember This by Anthony Giardina (FSG)

On its face, it’s another book about a writer living in Brooklyn. A layer deeper, it’s a book about fathers and daughters, occupations and vocations, ethos and pathos, failure and success. —JHM

Ultramarine by Mariette Navarro (Deep Vellum)

In this metaphysical and lyrical tale, a captain known for sticking to protocol begins losing control not only of her crew and ship but also her own mind. —MJS

We Tell Ourselves Stories by Alissa Wilkinson (Liveright)

Amid a spate of new books about Joan Didion published since her death in 2021, this entry by Wilkinson (one of my favorite critics working today) stands out for its approach, which centers Hollywood—and its meaning-making apparatus—as an essential key to understanding Didion's life and work. —SMS

Seven Social Movements that Changed America by Linda Gordon (Norton)

This book—by a truly renowned historian—about the power that ordinary citizens can wield when they organize to make their community a better place for all could not come at a better time. —CK

Mothers and Other Fictional Characters by Nicole Graev Lipson (Chronicle Prism)

Lipson reconsiders the narratives of womanhood that constrain our lives and imaginations, mining the canon for alternative visions of desire, motherhood, and more—from Kate Chopin and Gwendolyn Brooks to Philip Roth and Shakespeare—to forge a new story for her life. —SMS

Goddess Complex by Sanjena Sathian (Penguin)

Doppelgängers have been done to death, but Sathian's examination of Millennial womanhood—part biting satire, part twisty thriller—breathes new life into the trope while probing the modern realities of procreation, pregnancy, and parenting. —SMS

Stag Dance by Torrey Peters (Random House)

The author of Detransition, Baby offers four tales for the price of one: a novel and three stories that promise to put gender in the crosshairs with as sharp a style and swagger as Peters’ beloved latest. The novel even has crossdressing lumberjacks. —JHM

On Breathing by Jamieson Webster (Catapult)

Webster, a practicing psychoanalyst and a brilliant writer to boot, explores that most basic human function—breathing—to address questions of care and interdependence in an age of catastrophe. —SMS

Unusual Fragments: Japanese Stories (Two Lines)

The stories of Unusual Fragments, including work by Yoshida Tomoko, Nobuko Takai, and other seldom translated writers from the same ranks as Abe and Dazai, comb through themes like alienation and loneliness, from a storm chaser entering the eye of a storm to a medical student observing a body as it is contorted into increasingly violent positions. —MJS

The Antidote by Karen Russell (Knopf)

Russell has quipped that this Dust Bowl story of uncanny happenings in Nebraska is the “drylandia” to her 2011 Florida novel, Swamplandia! In this suspenseful account, a woman working as a so-called prairie witch serves as a storage vault for her townspeople’s most troubled memories of migration and Indigenous genocide. With a murderer on the loose, a corrupt sheriff handling the investigation, and a Black New Deal photographer passing through to document Americana, the witch loses her memory and supernatural events parallel the area’s lethal dust storms. —NodB

On the Clock by Claire Baglin, tr. Jordan Stump (New Directions)

Baglin's bildungsroman, translated from the French, probes the indignities of poverty and service work from the vantage point of its 20-year-old narrator, who works at a fast-food joint and recalls memories of her working-class upbringing. —SMS

Motherdom by Alex Bollen (Verso)

Parenting is difficult enough without dealing with myths of what it means to be a good mother. I, who often felt like a failure as a mother, appreciate Bollen's focus on a more realistic approach to parenting. —CK

The Magic Books by Anne Lawrence-Mathers (Yale UP)

For that friend who wants to concoct the alchemical elixir of life, or the person who cannot quit Susanna Clarke’s Jonathan Strange and Mr. Norrell, Lawrence-Mathers collects 20 illuminated medieval manuscripts devoted to magical enterprise. Her compendium includes European volumes on astronomy, magical training, and the imagined intersection between science and the supernatural. —NodB

Theft by Abdulrazak Gurnah (Riverhead)

The first novel by the Tanzanian-British Nobel laureate since his surprise win in 2021 is a story of class, seismic cultural change, and three young people in a small Tanzanian town, caught up in both as their lives dramatically intertwine. —JHM

Twelve Stories by American Women, ed. Arielle Zibrak (Penguin Classics)

Zibrak, author of a delicious volume on guilty pleasures (and a great essay here at The Millions), curates a dozen short stories by women writers who have long been left out of the American literary canon—most of them women of color—from Frances Ellen Watkins Harper to Zitkala-Ša. —SMS

I'll Love You Forever by Giaae Kwon (Holt)

K-pop’s sky-high place in the fandom landscape made a serious critical assessment inevitable. This one blends cultural criticism with memoir, using major artists and their careers as a lens through which to view the contemporary Korean sociocultural landscape writ large. —JHM

The Buffalo Hunter Hunter by Stephen Graham Jones (Saga)

Jones, the acclaimed author of The Only Good Indians and the Indian Lake Trilogy, offers a unique tale of historical horror, a revenge tale about a vampire descending upon the Blackfeet reservation and the manifold of carnage in their midst. —MJS

True Mistakes by Lena Moses-Schmitt (University of Arkansas Press)

Full disclosure: Lena is my friend. But part of why I wanted to be her friend in the first place is because she is a brilliant poet. Selected by Patricia Smith as a finalist for the Miller Williams Poetry Prize, and blurbed by the great Heather Christle and Elisa Gabbert, this debut collection seeks to turn "mistakes" into sites of possibility. —SMS

Perfection by Vincenzo Latronico, tr. Sophie Hughes (NYRB)

Anna and Tom are expats living in Berlin, enjoying their freedom as digital nomads and cultivating their passion for capturing perfect images, but after both friends and time itself move on, their own pocket of creative freedom turns to boredom, their life trajectories cast in doubt. —MJS

Guatemalan Rhapsody by Jared Lemus (Ecco)

Lemus's debut story collection paints a composite portrait of the people who call Guatemala home—and those who have left it behind—with a cast of characters that includes a medicine man, a custodian at an underfunded college, wannabe tattoo artists, four orphaned brothers, and many more.

Pacific Circuit by Alexis Madrigal (MCD)

The Oakland, Calif.–based contributing writer for the Atlantic digs deep into the recent history of a city long under-appreciated and under-served that has undergone head-turning changes throughout the rise of Silicon Valley. —JHM

Barbara by Joni Murphy (Astra)

Described as "Oppenheimer by way of Lucia Berlin," Murphy's character study follows the titular starlet as she navigates the twinned convulsions of Hollywood and history in the Atomic Age.

Sister Sinner by Claire Hoffman (FSG)

This biography of the fascinating Aimee Semple McPherson, America's most famous evangelist, takes religion, fame, and power as its subjects alongside McPherson, whose life was suffused with mystery and scandal. —SMS

Trauma Plot by Jamie Hood (Pantheon)

In this bold and layered memoir, Hood confronts three decades of sexual violence and searches for truth among the wreckage. Kate Zambreno calls Trauma Plot the work of "an American Annie Ernaux." —SMS

Hey You Assholes by Kyle Seibel (Clash)

Seibel’s debut story collection ranges widely from the down-and-out to the downright bizarre as he examines with heart and empathy the strife and struggle of his characters. —MJS

James Baldwin by Magdalena J. Zaborowska (Yale UP)

Zaborowska examines Baldwin's unpublished papers and his material legacy (e.g. his home in France) to probe the great writer's life and work, as well as the emergence of the "Black queer humanism" that Baldwin espoused. —CK

Stop Me If You've Heard This One by Kristen Arnett (Riverhead)

Arnett is always brilliant and this novel about the relationship between Cherry, a professional clown, and her magician mentor, "Margot the Magnificent," provides a fascinating glimpse of the unconventional lives of performance artists. —CK

Paradise Logic by Sophie Kemp (S&S)

The deal announcement describes the ever-punchy writer’s debut novel with an infinitely appealing appellation: “debauched picaresque.” If that’s not enough to draw you in, the truly unhinged cover should be. —JHM


A Year in Reading: 2024

Welcome to the 20th (!) installment of The Millions' annual Year in Reading series, which gathers together some of today's most exciting writers and thinkers to share the books that shaped their year. YIR is not a collection of year-end best-of lists; think of it, perhaps, as an assemblage of annotated bibliographies. We've invited contributors to reflect on the books they read this year—an intentionally vague prompt—and encouraged them to approach the assignment however they choose.

In writing about our reading lives, as YIR contributors are asked to do, we inevitably write about our personal lives, our inner lives. This year, a number of contributors read their way through profound grief and serious illness, through new parenthood and cross-country moves. Some found escape in frothy romances, mooring in works of theology, comfort in ancient epic poetry. More than one turned to the wisdom of Ursula K. Le Guin. Many describe a book finding them just when they needed it.

Interpretations of the assignment were wonderfully varied. One contributor, a music critic, considered the musical analogs to the books she read, while another mapped her reads from this year onto constellations. Most people's reading was guided purely by pleasure, or else a desire to better understand events unfolding in their lives or the larger world. Yet others centered their reading around a certain sense of duty: this year one contributor committed to finishing the six Philip Roth novels he had yet to read, an undertaking that he likens to “eating a six-pack of paper towels.” (Lucky for us, he included in his essay his final ranking of Roth's oeuvre.)

The books that populate these essays range widely, though the most commonly noted title this year was Tony Tulathimutte’s story collection Rejection. The work of newly minted National Book Award winner Percival Everett, particularly his acclaimed novel James, was also widely read and written about. And as the genocide of Palestinians in Gaza enters its second year, many contributors sought out Isabella Hammad’s searing, clear-eyed essay Recognizing the Stranger.

Like so many endeavors in our chronically under-resourced literary community, Year in Reading is a labor of love. The Millions is a one-person editorial operation (with an invaluable assist from SEO maven Dani Fishman), and producing YIR—and witnessing the joy it brings contributors and readers alike—has been the highlight of my tenure as editor. I’m profoundly grateful for the generosity of this year’s contributors, whose names and entries will be revealed below over the next three weeks, concluding on Wednesday, December 18. Be sure to subscribe to The Millions’ free newsletter to get the week’s entries sent straight to your inbox each Friday.

—Sophia Stewart, editor

Becca Rothfeld, author of All Things Are Too Small

Carvell Wallace, author of Another Word for Love

Charlotte Shane, author of An Honest Woman

Brianna Di Monda, writer and editor

Nell Irvin Painter, author of I Just Keep Talking

Carrie Courogen, author of Miss May Does Not Exist

Ayşegül Savaş, author of The Anthropologists

Zachary Issenberg, writer

Tony Tulathimutte, author of Rejection

Ann Powers, author of Traveling: On the Path of Joni Mitchell

Lidia Yuknavitch, author of Reading the Waves

Nicholas Russell, writer and critic

Daniel Saldaña París, author of Planes Flying Over a Monster

Lili Anolik, author of Didion and Babitz

Deborah Ghim, editor

Emily Witt, author of Health and Safety

Nathan Thrall, author of A Day in the Life of Abed Salama

Lena Moses-Schmitt, author of True Mistakes

Jeremy Gordon, author of See Friendship

John Lee Clark, author of Touch the Future

Ellen Wayland-Smith, author of The Science of Last Things

Edwin Frank, publisher and author of Stranger Than Fiction

Sophia Stewart, editor of The Millions

A Year in Reading Archives: 2023, 2022, 2021, 2020, 2019, 2018, 2017, 2016, 2015, 2014, 2013, 2011, 2010, 2009, 2008, 2007, 2006, 2005

Breaking the Mold: Can Jeff Bezos Save the Washington Post?

1.

That yowl of pain you heard coming from the nation’s capital on Monday afternoon was the death cry of the print newspaper business as it was cut to the heart by the buyout of the Washington Post by Amazon CEO Jeff Bezos. Just forty years ago, during the Watergate scandal, the Post was an economic and cultural force potent enough to help take down a sitting president, and now it has itself been taken down by a guy who less than twenty years ago was working out of his garage selling books on the Internet.

The surprise $250 million deal has coup de grâce written all over it, and in newsrooms across the country, reporters and editors – those who still have jobs – will be grousing over the rich symbolism of one of the crown jewels of American journalism being snapped up by a mogul made rich by the very technology that killed the print business model. But once the grumbling dies down, those of us who care about journalism and culture may have to concede two obvious points. First, we damn well better hope Bezos succeeds where others have failed in figuring out how to produce professional-quality content in the digital age, not just for the sake of the Washington Post or even of the news business, but for the sake of cultural and artistic production in general. Second, of all the billionaires with the means to buy a major cultural institution like the Washington Post, Jeff Bezos might just be the one who can reinvent it for the 21st century.

The details of the Post deal are curious – and telling. For one thing, the newspaper’s parent company, which owns a diverse group of media and educational businesses including the Kaplan test prep company, is selling only its newspapers and holding on to its other businesses. For another, Bezos is buying the Post on his own, and won’t merge it into Amazon, the online retailing behemoth he still runs. Finally, Bezos has said he doesn’t intend to lay off employees and will keep Katharine Weymouth, granddaughter of legendary Post publisher and Washington powerbroker Katharine Graham, on as the newspaper’s publisher.

In other words, the Washington Post Company sees its signature property as a money-loser, and Bezos appears to be stepping in as a white knight to save a cultural institution from falling into disrepair. The history of these sorts of deals is mixed at best. After all, just three years ago the Post Company sold once-mighty Newsweek for a dollar to yet another billionaire, 92-year-old Sidney Harman, who brought in former New Yorker editor Tina Brown, with a plan to merge the aging print weekly with the website The Daily Beast. Harman, however, promptly died, and with the magazine hemorrhaging readers, Brown just last week severed ties between the website and the print magazine, selling Newsweek to start-up International Business Times.

As its purchase price suggests, the Washington Post is in far better shape than Newsweek was, but the paper’s core business of covering inside-the-Beltway news is under threat from websites like Politico. More importantly, the paper faces the same problem all legacy news organizations face, which is how to scale back its news operation to a level that is economically sustainable in a post-print era without doing fatal damage to the news gathering itself. To do that, though, requires a nuanced understanding of why the old business model failed in the first place.

2.

There are two versions of the story of why print newspapers bit the dust, one a tech-geek fantasy, the other a more prosaic business tale. In the tech-geek fantasy version, spread by the likes of digivangelist Clay Shirky in his 2008 book Here Comes Everybody, newspapers were beaten at their own game by bloggers and regular citizens armed with iPhones and laptops who were able to deliver news faster and more cheaply than the old print warhorses. Ironically, Shirky and others who advance this theory are laboring under the same misconception that has plagued news executives for the last twenty years – namely, the assumption that the principal business of a newspaper is gathering news. Newspapers don’t sell news. Rather, they give news away for free in order to maintain a distribution system for business information, most of which takes the form of paid ads. Newspapers remained as lucrative as they were for as long as they did because until the introduction of the web browser in 1994, nothing else offered cheap access to the millions of ordinary consumers who picked up the paper that landed on their front curb every morning.

Understanding this distinction helps explain why television hurt but did not kill newspapers. Television long ago entered more homes than newspapers ever did, and in many ways TV, which includes moving pictures and sound, is a better delivery device for news. But again, news isn’t the product for sale; advertising is, and by its nature, television can only effectively sell broad conceptual ideas that can be communicated visually in thirty seconds. You can use television to convince millions of Americans to shop at Safeway, but you can’t very well use TV to tell Americans about everything that's on sale that week at their neighborhood Safeway. And if you are trying to find a roommate or selling some old furniture, you can’t afford the thousands of dollars it would cost to run even a fifteen-second spot on a local station. For those kinds of tasks, you called up your local paper and bought a classified ad – until, that is, Craigslist and eBay came along and let people post those ads essentially for free.

To repeat: newspapers aren't dying because they're getting beat on news reporting. Newspapers are dying because the Internet separated the news content from the advertising revenue stream. For generations news executives thought they were selling news, while in fact they were selling a pipeline to consumers that companies and individuals paid to use. Now, the Internet itself is that pipeline, and we’re watching a wild scramble to see who will control it and the rafts of dollars flowing down its many tributaries. So far, tech giants like Apple, Facebook, Google, and, yes, Amazon, are winning that battle hands down.

This same battle, meanwhile, has been playing out across all forms of cultural and artistic expression. Twenty years ago, if a rock band wanted to find a wide audience for its music, it signed with a record label, which then recorded the band’s music and distributed it to stores across the country. Now, thanks to the ease of digital distribution, young musicians can bypass record labels and post their songs online for free. But if they want to make any real money from their work, they will almost certainly have to turn to Apple’s iTunes site and its direct pipeline to America’s ears.

A similar story has played out in the movie business, which only a few years ago could depend on DVD rentals to make up revenue lost at the box office. Now, thanks to streaming services like Netflix, DVD rental fees have dried up and movie studios are madly turning out special-effects-laden comic-book serials in the hopes of winning over American teenagers and Chinese moviegoers, the last groups still consistently willing to pay to watch a movie in a theater (as long as it’s loud, violent, and not overly dependent on the subtleties of spoken English).

Books have been somewhat insulated from these disruptions because, so far, most readers still prefer physical books over e-books, but the terms of the battle are the same. Publishing firms, which have for generations paid writers to produce and editors to curate books, are fighting tech giants Apple and Amazon, which view books primarily as loss leaders they can use to attract customers to their e-readers. So long as most readers continue to prefer printed books, publishing will limp along in its wounded state, rather like the news business after television but before the Internet. But there is a tipping point at which e-readers, and the recommendation engines controlled by the tech giants, could take over the curating role now played by publishing houses, thereby killing the publishing industry as we know it.

3.

All of which brings us back to Jeff Bezos and the Washington Post. Over the last twenty years, much of the money and power once held by content producers – newspapers, record labels, movie studios, publishing houses, etc. – has transferred to the tech giants that now control the digital pipelines to consumers. This means that it’s much easier for any individual artist or journalist to reach an audience, which is a great and good thing, but it also means that the tech giants controlling the pipelines are taking ever increasing shares of profits.

For the past decade or so, we have been enjoying a strange hangover period of the pre-digital age. A generation of journalists and artists trained in the dead-tree era, who have few other marketable skills, have continued producing art and journalism even though they are getting paid far less for their work than they used to. But every year more of these content producers are retiring or moving on, and we are entering a new period dominated by the first truly digital generation of bloggers and artists who are faced with the task of rebuilding the culture industries out of the ashes of the tech explosion.

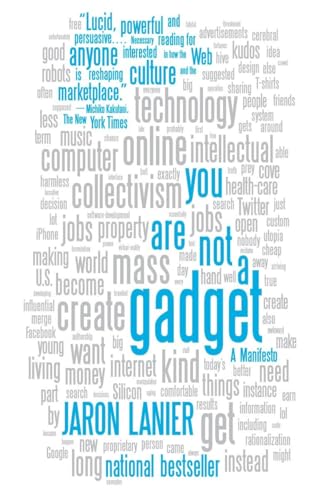

I and many others have argued that, so far at least, this generation has relied too heavily on memes and information derived from the legacy content producers. In journalism, this has meant hordes of bloggers feasting on an ever-shrinking supply of reported news from print-based news organizations. In film, this has meant kajillions of kids with camera phones riffing on existing story worlds, like Star Wars and Harry Potter, and uploading the results onto YouTube. As Jaron Lanier, a digital pioneer recently turned Internet skeptic, puts it in his 2011 book You Are Not a Gadget, we are a culture in danger of “effectively eating its own seed stock.”

Obviously, this cannot go on forever, but thus far the most powerful technological disrupters have shown little interest in investing in the content carried along their digital pipelines. Apple, with its market-making iPhone and iPad devices, sparked a creative revolution in the world of apps, but when it comes to cultural content like books, movies, and news, all the tech companies have done is make it cheaper and easier to get what you want, cutting deeply into the profit margins for the content producers in the process.

Amazon, which now controls a quarter of the book business, has of course played a huge role in this devaluing of cultural content, but in recent years Amazon has also quietly begun investing in content of its own. Since 2009, Amazon has launched imprints focusing on romance (Montlake Romance), thrillers (Thomas & Mercer), and sci-fi (47North), and now even general adult titles (New Harvest) and literary fiction (Little A). Compared with Amazon itself, these ventures are tiny, and they have run into trouble with rival booksellers like Barnes & Noble, which have refused to stock their titles. But whether these imprints succeed or fail, they demonstrate that Bezos has begun to wrap his mind around what it would mean if his company squeezed so much value out of the book business that publishing became in effect one long amateur hour.

So, is the Washington Post purchase a step in the same direction, an effort on Bezos's part to invest directly in the content that fuels his billion-dollar pipelines? The short answer is nobody knows. By all accounts the deal came together quickly, and it may well be that Bezos himself is unsure just what he wants to do with the Post. For a man worth $25.2 billion, as Bezos is, a $250 million newspaper truly can qualify as an impulse buy. Perhaps this is simply the billionaire’s answer to collecting old-fashioned typewriters. Let’s hope that’s not the case because whatever you may think of Bezos and others who broke the pre-Internet business model, the fact is it's broken – and who better to fix it than the man who helped break it in the first place?

Bezos, who has never worked in the news business, may be less attached to the dying print model than most print-news lifers and thus more willing to embrace digital-only innovations. As a man who has made his living tapping the powers of the interwebs, he may be better able to see that strict paywalls, which limit linking and bring in few dollars, are a dead end for most news organizations. As a CEO who recently bought out the reader hub Goodreads, he may be more open to recasting the newspaper as a community gathering spot, a sort of localized wiki combining conversation, community news, and event listings with ad revenues supporting a small, professional news staff. Most important, as a manager who has excelled at the long game, spending years investing in infrastructure for Amazon rather than diverting profits to shareholders, Bezos might be more willing to lose some money while figuring out how to marry news quality with profitability.

Or maybe not. Maybe the guy just wants a $250 million toy. But let’s hope not, because if that’s the case we stand to lose a lot more than a grand old newspaper that once helped take down a president.

The Bathrobe Era: What the Death of Print Newspapers Means for Writers

Today, April 30, marks the twentieth anniversary of my last day in the newsroom of a daily newspaper. In truth, my newspaper career was neither long nor particularly illustrious. For about four years in my early twenties I worked at two small newspapers: the Mill Valley Record, the decades-old weekly newspaper in my hometown that died a few years after I left; and the Aspen Daily News, which, miraculously, remains in business today. Still, I loved the newspaper business. I have never worked with better people than I did in that crazy little newsroom in Aspen, and I probably never will. I quit because it dawned on me that, while I was a good reporter, I had neither the skills nor the intestinal fortitude to follow in the footsteps of my heroes, investigative reporters like Bob Woodward and David Halberstam. What I couldn’t know the day I left the Daily News and began the long trek that led first to graduate school and then to college teaching was the sheer destructive power of the bullet I was dodging.

The Pew Research Center’s “State of the News Media 2012” report offers a sobering portrait of what has happened to print journalism in the twenty years since I left. After a small bump during the Clinton Boom of the 1990s, advertising revenue for America’s newspapers has fallen off a cliff in the past decade, dropping by more than half from a peak of $48.7 billion in 2000 to $23.9 billion in 2011. Thus far at least, online advertising isn’t saving the business as some hoped it might. Online advertising for newspapers was up $207 million between 2010 and 2011, but in that same period, print advertising was down $2.1 billion, meaning print losses outweighed online gains by a ratio of roughly 10 to 1.

But as troubling as the death of print journalism may be for our collective civic and political lives, it may have an even more lasting impact on our literary culture. For more than a century, newspaper jobs provided vital early paychecks, and even more vital training grounds, for generations of American writers as different as Walt Whitman, Ernest Hemingway, Joyce Maynard, Hunter S. Thompson, and Tony Earley. Just as importantly, reporting jobs taught nonfiction writers from Rachel Carson to Michael Pollan how to ferret out hidden information and present it to readers in a compelling narrative.

Now, though, the infrastructure that helped finance all those literary apprenticeships is fast slipping away. The vacuum left behind by dying print publications has been largely filled by blogs, a few of them, like the Huffington Post and the Daily Beast, connected to huge corporations, many others written by bathrobe-clad auteurs like yours truly. This is great for readers who need only fire up their laptop – or increasingly, their tablet or smartphone – and have instant access to nearly all the information produced in the known world, for free.

But the system’s very efficiency is also its Achilles' heel. When I worked in newspapers, a good part of my paycheck came from sales of classified ads. That’s all gone now, thanks to Craigslist and eBay. We also were a delivery system for circulars from grocery stores and real estate firms advertising their best deals. Buh-bye. Display ads still exist online, but advertisers are increasingly directing their ad dollars to Google and Facebook, which do a much better job of matching ads to their users’ needs. Add to this the longer-term trend of locally owned grocery stores, restaurants, and clothing shops being replaced by national chains, which draw more business from nationwide TV ad campaigns, and the economic model that supported independent reporting for more than a hundred years has vanished.

Without a way to make a living from their work, most bloggers are hobbyists, and most hobbyists come at their hobby with an angle. So, you have realtor blogs that tout local real estate and inveigh against property taxes. Or you have historical preservation blogs that rail against any new construction. Or you have plain old cranks of the kind who used to hog the open discussion time at the beginning of local city council meetings, but now direct their rants, along with pictures, smart-phone videos, and links to other cranks in other cities, onto the Internet. What you don’t have is a lot of guys like I used to be, who couldn't care less about the outcome of the events they’re covering, but are being paid a living wage to present them accurately to readers.

The debate over the downsides of the Internet tends to focus on the consumer end, arguing, as Nicholas Carr does in his bestseller, The Shallows, that the Internet is making us dumber. That may or may not be true – I have my doubts – but as we near the close of the second decade of the Internet Era, we may be facing a far greater problem on the producer end: the atrophying of a central skill set necessary to great literature, that of taking off the bathrobe and going out to meet the people you are writing about. I mean to cast no generational aspersions toward the web-savvy writers coming up behind me, but having done both, I can tell you that blogging is nothing like reporting. Just about any fact you can find, or argument you can make, is available online, and with a few clicks of the mouse, anyone can sound like an expert on virtually any subject. And, because so far the blogosphere is, for the great majority of bloggers, quite nearly a pay-free zone, most bloggers are so busy earning a living at their real job, they have no time for old-fashioned shoe-leather reporting even if they had the skill set.

But in the main, today’s younger bloggers don’t have those skills, because shoe-leather reporting isn’t all that useful in the Internet age. Reporting is slow. It’s analog. You call people up and talk to them for half an hour. Or you arrange a time to meet and talk for an hour and a half. It can take all day to report a simple human-interest story. To win eyeballs online, you have to be quick and you have to be linked. Read Gawker some time. Or Jezebel. Or even a site like Talking Points Memo. There’s some original reporting there, but more common are riffs on news stories or memes created by somebody else, often as not from television or the so-called “dead-tree media.” When there is an original piece online, often it comes from an author flacking for another, paying gig – a book, a business venture, a weight-loss program, a political career.

Clay Shirky, the NYU media studies professor and author of Here Comes Everybody, has suggested the crumbling of economic support for traditional print media and the original reporting it engendered is a temporary stage in the healthy process of creative destruction that goes along with the advent of any new game-changing technology. “The old stuff gets broken faster than the new stuff is put in its place,” Shirky is quoted as saying in The Pew Center’s “State of the News Media 2010” report.

Maybe Shirky is right and online news sites will discover an economic model to replace the classified pages and grocery-store ads, but as virtual reality pioneer Jaron Lanier points out in You Are Not a Gadget, we’ve been waiting a long time for the destruction to start getting creative. Lanier, who is more interested in music than writing, argues that for all the digi-vangelism about the waves of creativity that would follow the advent of musical file-sharing, what has happened so far is that music has gotten stuck in a self-reinforcing loop of sampling and imitation that has led to cultural stasis. “Where is the new music?” he asks. “Everything is retro, retro, retro.”

Lanier writes:

I have frequently gone through a conversational sequence along the following lines: Someone in his early twenties will tell me I don’t know what I’m talking about, and then I’ll challenge that person to play me some music that is characteristic of the late 2000s as opposed to the late 1990s. I’ll ask him to play the track for his friends. So far, my theory has held: even true fans don’t seem to be able to tell if an indie rock track or a dance mix is from 1998 or 2008, for instance.

I am certainly not the go-to guy on contemporary music, but, like Lanier, I fear we are creating a generation of riff artists, who see their job not as creating wholly new original projects but as commenting upon cultural artifacts that already exist. Whether you’re talking about rappers endlessly “sampling” the musical hooks of their forebears, or bloggers snarking about the YouTube video of Miami Heat star LeBron James holding his nose on the bench after one of his teammates farted during the first quarter of a game against the Chicago Bulls, you are seeing a culture, as Lanier puts it, “effectively eating its own seed stock.”

Thus far this cultural Möbius strip hasn’t affected books to the same degree that it has the news media and music because, well, authors of printed books still get paid for having original ideas. (If you wonder why cyber evangelists like Clay Shirky keep writing books and magazine articles printed on dead trees, there’s your answer. Writing a book is a paid gig. Blogging is effectively a charitable donation to the cultural conversation, made in the hope that one’s donation will pay off in some other sphere, like, say, getting a book contract.) The recent U.S. government suit against Apple and book publishers over alleged price-fixing in the e-book market, which would allow Amazon to keep deeply discounting books to drive Kindle sales, suggests that authors can’t necessarily count on making a living from writing books forever. But even if, by some miracle, books continue to hold their economic value as they move into the digital realm, the people who write them will still need a way to make a living – and just as importantly, learn how to observe and describe the world beyond their laptop screen – in the decade or so it takes a writer to arrive at a mature and original vision.

Try to imagine what would have become of Hemingway, that shell-shocked World War I vet, if he hadn’t found work on the Kansas City Star, and later, the job as a foreign correspondent for the Toronto Star that allowed him to move to Paris and raise a family. The same goes for a writer as radically different as Hunter S. Thompson, who was saved from a life of dissipation by an early job as a sportswriter for a local paper, which led to newspaper gigs in New York and Puerto Rico. All of his best books began as paid reporting assignments, and his genius, short-lived as it was, was to be able to report objectively on the madness going on inside his drug-addled head.

In 2012, we live in a bit of a false economy in that novelists and nonfiction writers in their thirties and forties are still just old enough to have begun their careers before content began to migrate online. Thus, we can thank magazines for training and paying John Jeremiah Sullivan, whose book of essays, Pulphead, consists largely of pieces written on assignment for GQ and Harper’s. We should also be thankful for Gourmet magazine, which, until it went under in 2009, sent novelist Ann Patchett on lavish, all-expenses-paid trips around the world, including one to Italy, where she did the research on opera singers that fueled her bestselling novel, Bel Canto. In a quirkier, but no less important way, we can thank glossy magazines for The Corrections by Jonathan Franzen, who supported himself by writing for Harper’s, The New Yorker, and Details during his long, dark night of the literary soul in the late 1990s before his breakout novel was published.

Those venues – most of them, anyway – still exist, but they are the top of the publishing heap, and the smaller, entry-level publications of the kind I worked for twenty years ago are either dying or going online. Increasingly, my decision to leave journalism to enter an MFA program twenty years ago seems less a personal life choice than an act guided by very subtle, yet very powerful economic incentives. As paying gigs for apprentice writers continue to dwindle, apprentice writers are making the obvious economic choice and entering grad school, which, whatever its merits as a writing training program, at least has the benefit of possibly leading to a real, paying job – as a teacher of creative writing, which, as you may have noticed, is what most working literary writers do for a living these days.

Perhaps that is what people are really saying when they talk about the “MFA aesthetic,” that insular, navel-gazing style that has more to do with a response to previous works of fiction than to the world most non-writers live in. Perhaps the problem isn’t with MFA programs at all, but with the fact that, for most graduates of MFA programs, it’s the only training in writing they have. They haven’t done what any rookie reporter at any local newspaper has done, which is to observe a scene – a city council meeting, a high school football game, a small-plane crash – and then write about it on the front page of a paper that everybody involved in that scene will read the next day. They haven’t had to sift through a complex, shifting set of facts – was that plane crash a result of equipment malfunction or pilot error? – and not only get the story right, but make it compelling to readers, all under deadline as the editor and a row of surly press guys are standing around waiting to fill that last hole on page one. They haven’t, in short, had to write, quickly, under pressure, for an audience, with their livelihood on the line.

It is, of course, pointless to rage against the Internet Era. For one thing, it is already here, and for another, the Web is, on balance, a pretty darn good thing. I love email and online news. I use Wikipedia every day. But we need to listen to what the Jaron Laniers of the world are saying, which is that we can choose what the Web of the future will look like. The Internet is not like the weather. It isn’t something that just happens to us. The Internet is merely a very powerful tool, one we can shape to our collective will, and the first step along that path is deciding what we value and being willing to pay for it.

Image via Wikimedia Commons

Gratuitous: How Sexism Threatens to Undermine the Internet

1.

In his book Here Comes Everybody, Clay Shirky explains why personal blogs and social networking sites can sometimes confound us. He argues that before the internet, it was easy to tell what was a broadcast and what was a private message. A television show was a broadcast -- a message meant for a large audience of people, a public message. A telephone call, on the other hand, was a private message, meant for one other person. On the internet, though, the difference between the two kinds of media is much smaller. Is a personal blog a public or a private communication? Is it meant for mass consumption by thousands or millions of people? Not typically, and yet it can be read, theoretically, by billions.

This blurring of the two types of media is so difficult to grasp that it's produced its own near-ubiquitous straw man argument, which blogger Jason Kottke calls "the breakfast question." It comes up whenever anyone writes about social media: "Why would I care what you ate for breakfast that morning?" Shirky's rebuttal to this is succinct:

"It's simple. They're not talking to you. We misread these seemingly inane posts because we're so unused to seeing written material in public that isn't intended for us. The people posting messages to one another in small groups are doing a different kind of communicating than people posting messages for hundreds or thousands of people to read."

I've been thinking about this particular idea a lot lately as it applies to Tumblr. For those who are unfamiliar with Tumblr, it's a blogging platform that categorizes posts into one form or another -- text, photo, chat, audio, video. It allows you to put out small bursts of content, which then goes into a feed. People can follow you, just as they can on Twitter, and they can "like" your posts and re-blog them. Tumblr offers a combination of Twitter's viral capabilities with a more customizable experience that allows for a tremendous level of personal expression.

I'm something of a Tumblr addict. It is the first thing I check in the morning -- before my email, before my Facebook page, but after I have some coffee (some addictions are more powerful than others). What I love about it is the social interaction. I follow a large number of personal blogs that post funnier, more creative versions of "Here's what I had for breakfast." (I was following a blog that was, literally, about what people ate for breakfast, but I dropped it. I guess they weren't talking to me.) I also follow a bunch of themed blogs -- The New Yorker Tumblr, for instance. They don't interact much with me, and that's fine. They're kind of like highly focused magazines, and I enjoy them accordingly.

But if that's all Tumblr was, I don't think it would be quite so important to me. It's the community that makes it special. Checking my Tumblr feed is like checking in with my friends, even if these "friends" are people I know very little about and will possibly never meet in real life. I met most of these people through friends of friends or via the social discovery that re-blogging affords. I somehow stumbled into their worlds, and they were interesting enough to make me want to come back. I interact with enough of them that I can pretty clearly say that when they post something, it is intended for me. I'm part of their small group, and I have no qualms about that.

Lisa, on the other hand, is a different matter. Lisa is a college student at a large university in the Midwest (and Lisa is not her name; I don't know whether she would want a bunch of book nerds suddenly reading her posts or not, so I'm not going to link to her blog here, either). She seems pretty smart, and she blogs about her love life, her schoolwork, her friends, and all of the other things that matter to her. I find Lisa's life very interesting, and her blog is great. But I haven't completely settled the "is she talking to me" question. While Lisa follows me back, we don't interact with each other. She uses Tumblr in a very social way, but she isn't really part of the crowd of people whom I otherwise follow. And I find this somewhat troubling.

2.

At this point, I need to lay a few things on the table. First, I don't have a lot of close friends. My wife has several friends with whom she speaks on a regular basis. They talk about the things that are happening in their lives and how they feel about them. I don't have that. I'm a social person, and there are certainly people I love to have dinner with, meet at a party, etc., but ever since college that kind of close friendship has eluded me. And I think I'm okay with that, for the most part. But you could certainly argue that I use Tumblr to fill some void in my life, as pathetic as that might sound.

Also, Lisa is very attractive. And Tumblr has a way of encouraging people's vanity. On Wednesdays, for example, there's a tradition of posting a photo of yourself; this is known as Gratuitous Picture of Yourself Wednesday (GPOYW). This has the effect of sexualizing a lot of Tumblr blogs, to the point that my wife, Edan, hated it for months and months after I joined because she felt like every woman on it focused so much of her attention on her sexuality. I think she's probably right, though that was largely about who I was following (I used to run with a bad crowd, man). So let me just clear this up for you: I'm not following Lisa because she's hot or because I'm a perv. Let's be honest, if I wanted to look at 20-year-old girls, there are other places to do it; this is the internet we're talking about. Also, Edan, now on Tumblr, follows Lisa, too. We talk about her posts with each other. "She needs to dump that guy; he's bad news. He won't even hold her hand!" Edan will say. "He's a college kid. What do you expect?" I'll reply.

While I can't deny that gender plays a role here, that's not all there is to it. I like following her because, for whatever reason, her narrative is compelling. Following her blog is somewhat akin to watching a reality TV show (Not one of the ones where they try to out-dance each other or diet for money, but one that just follows someone's daily life). She's my Jersey Shore.

But of course, Lisa isn't a reality TV character, she's a real person. Yes, I know Snooki is real, too, but celebrities are different. The fact that Lisa could walk the streets of every city in the world with complete anonymity makes her situation fundamentally different from, well, The Situation's. There are different laws governing pictures of celebrities and real people. Celebrities belong to us -- the public -- in ways that private citizens do not. And treating real people, regular people, the same way we treat celebrities, is problematic. And let’s not forget that Snooki and her ilk are paid to be in the public eye and to put up with all that entails.

3.

A few weeks ago, I went to a performance exhibition by my friend, the artist Charlie White. It was called Casting Call, and according to its website it was meant to further explore "White's ongoing interest in the complexities of the American teen as cultural icon, image, and national idea." For the exhibition, an art gallery was converted into two rooms, each separated from the other by a pane of glass. On one side of the room was a casting call for teen girls exemplifying "the All American California girl" -- blonde hair, tan skin, etc. -- between the ages of 13 and 16. White and his crew interviewed the models, took a mug shot-style photograph of them, and then brought in the next girl. On the other side of the glass, an audience -- mostly art students and hipsters -- watched. Our friend Stephanie, White's partner, pointed out that everyone on our side of the glass was brunette (except, it must be pointed out, Edan) while all of the models were, of course, blonde. White and his crew discussed each girl, both amongst themselves and with the girl, as well, but we could hear none of it. We were left to interpret the scene for ourselves. "Oh, look, they're letting that girl look at the photo. They must really like her," I said. "Yeah, either that or they could tell she was upset, and wanted to reassure her she did a good job."

A seemingly never-ending stream of girls came through the door. What fascinated me most about the entire exhibition was how quickly we could objectify the girls. I don't mean objectify them in the way that it's commonly used -- to turn them into sex objects -- though there was certainly a tinge of the erotic about the event; by objectify, I mean to make them into something not quite human, and in turn, to talk about them as though they were things rather than people. "She's too old." "I like that one, in the leopard-print shorts. She's my favorite." "Look at how weird her hair is. Why does she look like that?" It was how we talk about people when they're on television, but these people were merely a few feet away. The pane of glass, and the contrast between the brightly lit casting room and the dim audience space, was enough distance to effectively dehumanize these girls. There were other factors at work, such as the blonde California girl's status as marketing conceit and sexual totem, but I think a big reason we all felt free to dissect and dismiss these girls is that they couldn't really see us. We were, more or less, anonymous. It was especially unsettling to turn around after watching for a few minutes and see one of the girls who had been in the call standing just behind us. How long had she been there, the girl in the leopard-print shorts? And how did she suddenly become so real?

4.

The internet is such a tricky place now that anonymity actually needs to be explained and defined. There are a couple of flavors of anonymity on the web, and each of them comes with different issues. The first kind of anonymity is the one most of us are familiar with online, the anonymous user or commenter. This user is indistinguishable from the other anonymous commenters, and they can occasionally make some useful contributions. Anonymity can allow people to be more playful than they would be normally, maybe a little bit sexier, a little bit funnier. But they can also just be thugs. This type of anonymous user crops up on nearly every blog post, and while they occasionally voice a particularly controversial opinion, they are usually there only to spew bile and throw insults at the author of the post. In the comments of this site, I once joked that "anonymous" is always such a badass (to which Max replied, "I'd like a t-shirt that says 'Anonymous: Internet Badass.'"). There's a reason why some sites disable anonymous commenting of this kind; having no identity carries no threat of consequences. Even if others ridicule your ideas and effectively send you back to your cave with your tail between your legs, nobody knows who "you" are, so you can return the next day to fight again.

There's a second, more nuanced type of anonymity that is possibly more prevalent than simple anonymous commenting, and that's the disguise of the pseudonym. Every message board has its trolls, those who enjoy causing trouble, dissenting from the norm, and generally putting others down. I've yet to encounter a community online that doesn't have at least one of these people. They are rarely truly anonymous, since most message boards, social sites, and other internet communities typically require a user name. Instead, these users hide behind a moniker -- sometimes employing the same user name on multiple sites. Having some sort of identity does create some consequences. Users can be banned from sites, ostracized, or otherwise punished for their behavior.

Often, though, this type of user can simply change his name. This is another form of what Jaron Lanier, in his book You Are Not a Gadget, calls "transient anonymity":

People who can spontaneously invent a pseudonym in order to post a comment on a blog or on YouTube are often remarkably mean. Buyers and sellers on eBay are a little more civil, despite occasional disappointments, such as encounters with flakiness and fraud. Based on those data, you could conclude that it isn't exactly anonymity, but transient anonymity, coupled with a lack of consequences, that brings out online idiocy.

On Tumblr, most people interact via their blogs, which necessarily have a name attached to them. This ensures that people will be generally civil. It is also an opt-in system, where you have to choose whom to follow, which I think adds to the welcoming feel of the platform. It takes a while to build up a following and to create a blog you can be proud of; why throw that all away by being a creep or a jerk? The value of the blogs themselves creates an added buffer against what Lanier calls "drive-by anonymity."

But there's another element of Tumblr that I've seen cause some very disturbing encounters. Each Tumblr comes with the ability to enable a feature that allows others to ask you a question. It can also be used as a de facto messaging system. The user can then decide whether they want to post an answer to your question or delete it. The trouble starts when the user enables anonymous questions. Some people choose to leave anonymous questions enabled because it can lead to some very interesting content. For instance, if the user wrote a brave post about a disease they had, someone might leave an anonymous note about that, not wanting to reveal that they too have the disease. A more shallow but still amusing use is the frequent comment "I have a crush on you" or "I think you're beautiful," etc.

For every one such comment, there are dozens of vile, offensive comments, meant to do little other than demean the author of the blog and make them feel worse about themselves and their lives. For instance, I follow a woman who posts lots of photos of art, gorgeous film stills, great music, and, yes, sometimes pictures of herself. One day she put up the poster for the film The Girlfriend Experience, about a prostitute who spends the night with her clients, going to dinner or a movie before having sex for money. A day or two later, an anonymous person sent this message to her: "You look like you could give a pretty good 'girlfriend experience.' How about it? Ever given any thought to doing something like that?" My response to this post was, simply put, rage. I posted a response along the lines of "The rest of us are trying to have a civilization over here. Take that elsewhere." I was enraged that this person had used this feature of the blog to suggest that the blogger would make a good prostitute. Keep in mind that the author of this blog didn't have to make this public. I assume she did so (without comment) to shame the jerk who asked the question. But it's worth noting that there was no guarantee of attention from anyone beyond this one particular blogger. He did this solely to mess with, belittle, and intimidate the author of the blog. And he did so with impunity.

He wasn't alone. Every day, without fail, another person I follow posts a comment or question that an anonymous user asked them. These questions range from the classically juvenile ("I'm masturbating to you right now." "Take ur shirt off!") to more pointed personal assaults ("What's it like coping with your obvious addiction to sleeping pills?" "You post a lot of photos of yourself because your looks are the only thing you have going for you." "You're an obnoxious bitch who probably has no friends."). Not coincidentally, every one of these questions showed up on a blog written by a woman. So far, three bloggers that I follow have had to abandon their old online identities when creepy people began harassing them online. All of them were women.